The post discusses integrating Knowledge Graphs with Retrieval-Augmented Generation (RAG) using Neo4j and LangGraph. In the example setup, data extracted from documents is stored as a structured graph that can be queried. The resulting system supports natural-language question answering, combining graph relationships with embeddings to improve information retrieval.
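
As a rough illustration of how graph relationships and embeddings can work together during retrieval, here is a minimal in-memory sketch. It does not use Neo4j or LangGraph: the `KnowledgeGraph` class, the `retrieve` function, and the two-dimensional toy embeddings are hypothetical stand-ins. Embedding similarity selects the entity that best matches the question, and the graph then expands that entity into its directly connected facts, which would form the context passed to the LLM.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class KnowledgeGraph:
    """Hypothetical toy stand-in for a graph database such as Neo4j."""

    def __init__(self) -> None:
        self.embeddings: dict[str, list[float]] = {}  # entity name -> embedding
        self.facts: list[tuple[str, str, str]] = []   # (subject, relation, object)

    def add_entity(self, name: str, embedding: list[float]) -> None:
        self.embeddings[name] = embedding

    def add_fact(self, subject: str, relation: str, obj: str) -> None:
        self.facts.append((subject, relation, obj))

    def facts_about(self, entity: str) -> list[tuple[str, str, str]]:
        """One-hop neighbourhood: every fact where the entity is subject or object."""
        return [f for f in self.facts if entity in (f[0], f[2])]


def retrieve(graph: KnowledgeGraph, query_embedding: list[float]) -> tuple[str, list[str]]:
    """Pick the entity most similar to the query, then expand it through the graph."""
    if not graph.embeddings:
        raise ValueError("graph has no entities")
    seed = max(graph.embeddings, key=lambda name: cosine(query_embedding, graph.embeddings[name]))
    context = [f"{s} {r} {o}" for s, r, o in graph.facts_about(seed)]
    return seed, context


# Toy usage with made-up entities and embeddings.
g = KnowledgeGraph()
g.add_entity("Alice", [1.0, 0.0])
g.add_entity("Acme", [0.0, 1.0])
g.add_fact("Alice", "works_at", "Acme")
g.add_fact("Acme", "located_in", "Berlin")
print(retrieve(g, [0.1, 0.9]))  # ('Acme', ['Alice works_at Acme', 'Acme located_in Berlin'])
```

The point of the graph step is that "Acme located_in Berlin" is retrieved even though the query embedding was only matched against the entity "Acme"; a pure vector search over isolated text chunks would not follow that relationship.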

Testing AI Agents with the watsonx Orchestrate Agent Developer Kit (ADK) Evaluation Framework – A Hands-on Example

The post shows how to use the Evaluation Framework in the watsonx Orchestrate ADK to verify AI agent behavior, using a practical example: Galaxium Travels, a fictional booking system. It covers setting up the environment, defining user stories, generating synthetic test cases, and running evaluations, steps that are crucial for ensuring AI reliability and transparency.

Integrating watsonx Orchestrate Agent Chat in Web Apps

This blog post demonstrates how to use the web channel functionality in watsonx Orchestrate to embed conversational AI agents in custom web applications. It guides users through setting up a remote environment, generating source code, and running a web server to invoke the chat, and emphasizes the feature's ease of use and customization options.

REST API Usage with the watsonx Orchestrate Developer Edition locally: An Example

This post shows how to set up a local watsonx Orchestrate server and invoke a simple agent through its REST API using Python. It covers environment setup, retrieving a Bearer token, listing agent IDs, and running the code.
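
To show how a Bearer token is typically attached to each REST call, here is a minimal standard-library sketch. It is not the post's code: `BASE_URL` and `AGENTS_PATH` are hypothetical placeholders (check the URL your local server reports and the endpoint documented for your watsonx Orchestrate version), and the assumed response shape, a JSON list of objects with an `id` field, is also unverified.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with the URL your local server reports and
# the endpoint path documented for your watsonx Orchestrate version.
BASE_URL = "http://localhost:4321"
AGENTS_PATH = "/api/v1/agents"  # assumed path, not verified


def build_request(token: str, path: str = AGENTS_PATH) -> urllib.request.Request:
    """Build a GET request that authenticates with a Bearer token."""
    return urllib.request.Request(
        BASE_URL + path,
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
        method="GET",
    )


def extract_ids(payload: list[dict]) -> list[str]:
    """Assumes the response body is a JSON list of objects that each carry an "id"."""
    return [agent["id"] for agent in payload]


def list_agent_ids(token: str) -> list[str]:
    """Send the request to the (assumed) agents endpoint and return the agent IDs."""
    with urllib.request.urlopen(build_request(token)) as response:
        return extract_ids(json.load(response))
```

Keeping request construction separate from the network call makes it easy to inspect the headers before pointing the script at a running server.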

Build, Export & Import a watsonx Orchestrate Agent with the Agent Development Kit (ADK)

This post guides users through building an AI agent locally with the watsonx Orchestrate Agent Development Kit (ADK), exporting it from their local setup, and importing it into a remote instance on IBM Cloud. This workflow combines convenient local development with an efficient path to production deployment.

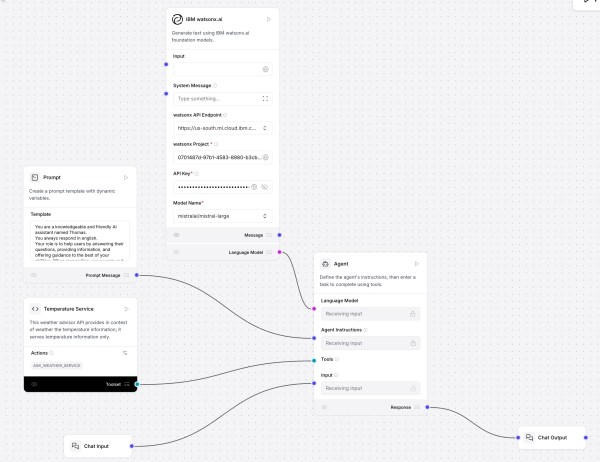

Create Your First AI Agent with Langflow and watsonx

This post shows how to use Langflow with watsonx.ai and a custom “Temperature Service” component that fetches and ranks live city temperatures. It covers installation, flow setup, agent prompting, tool integration, and interactive testing. Langflow’s visual design, MCP support, and extensibility enable rapid prototyping; DevOps and version control are a future focus.

Avoid the DCO error for your pull requests in a GitHub repository fork

This post explains how to resolve the 'DCO is missing' error that can appear on pull requests from a fork of a GitHub project. It outlines how to amend commits with a sign-off, including adding a commit-msg hook script. Following these steps ensures that your pull request passes the DCO check.
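
For illustration, a commit-msg hook along these lines can append the sign-off automatically. This is a hedged Python sketch, not the script from the post; the function names are hypothetical. Git runs a commit-msg hook with the path of the commit message file as its only argument, and the hook reads `user.name` and `user.email` from the git configuration.

```python
#!/usr/bin/env python3
"""Sketch of a commit-msg hook that appends a DCO sign-off if it is missing.

Install by saving as .git/hooks/commit-msg and making it executable.
"""
import subprocess
import sys


def add_signoff(message: str, name: str, email: str) -> str:
    """Return the message with a Signed-off-by trailer, unless it already has one."""
    trailer = f"Signed-off-by: {name} <{email}>"
    if trailer in message.splitlines():
        return message  # already signed off, e.g. via `git commit -s`
    # Separate the trailer from the message body with a blank line.
    return f"{message.rstrip()}\n\n{trailer}\n"


def git_config(key: str) -> str:
    """Read a value such as user.name from the git configuration."""
    result = subprocess.run(["git", "config", key], capture_output=True, text=True, check=True)
    return result.stdout.strip()


def main(message_path: str) -> None:
    with open(message_path, encoding="utf-8") as f:
        message = f.read()
    signed = add_signoff(message, git_config("user.name"), git_config("user.email"))
    with open(message_path, "w", encoding="utf-8") as f:
        f.write(signed)


# Git runs the hook with a single argument: the path of the commit message file.
if __name__ == "__main__" and len(sys.argv) == 2:
    main(sys.argv[1])
```

The hook only affects new commits; an existing last commit can be signed with `git commit --amend --signoff`. Git's built-in `git interpret-trailers` command is a more robust alternative when messages already contain other trailers.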

Getting Started with Local AI Agents in the watsonx Orchestrate Development Edition

The blog post outlines how to set up the Agent Development Kit (ADK) to build and run AI agents locally with the watsonx Orchestrate Developer Edition. It covers the prerequisites, installing the necessary software, and loading an example agent, plus an optional integration with Langfuse for observability.

The Rise of Agentic AI and Managing Expectations

This blog post discusses the emergence of agentic AI, which, unlike traditional generative AI, can plan and execute complex tasks autonomously. Because these systems can be unpredictable and may hallucinate, the post stresses managing expectations, providing oversight, and ensuring transparency. It highlights LangGraph as a powerful tool for developing agentic workflows.

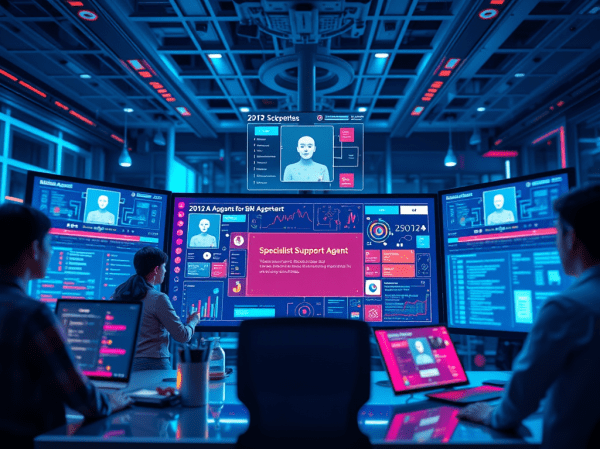

Supercharge Your Support: Example Build & Orchestrate AI Agents with watsonx.ai and watsonx Orchestrate

This post explains how to create, test, and integrate AI support agents using IBM's watsonx.ai and watsonx Orchestrate. It walks through an example of integrating a Specialist Support Agent for DB2 into a multi-agent orchestration, and highlights best practices for building efficient agent workflows that produce accurate responses while anticipating potential complexities.