Podman continues to improve its container-management capabilities, including integration with Kubernetes. This blog post outlines how to configure a Podman machine: creating a machine with specific resources and changing its configuration without deleting it. It highlights the essential commands podman machine init and podman machine set.

Unlock watsonx Capabilities: Where do you start looking for implementation examples as an AI engineer or developer?

IBM has launched the watsonx Developer Hub, which has four sections: Get Started, Capabilities, Guides, and Support. The Hub is a useful starting point for developers who want to learn about watsonx and find implementation examples.

An Example of how to use the “Bee Agent Framework” (v0.0.33) with watsonx.ai

This blog post explores the Bee Agent Framework integration with watsonx.ai and details the setup of a weather agent example on macOS. It covers the required installations, the environment variable configuration, and the code updates needed because of changes in the framework. The execution output shows how the agent retrieves current weather data for Las Vegas.

IBM Granite for Code models are available on Hugging Face and ready to be used locally with “watsonx Code Assistant”

The IBM Granite for Code models on Hugging Face can be run locally and integrated with VS Code through watsonx Code Assistant. They support 116 programming languages and are available under the Apache 2.0 license.

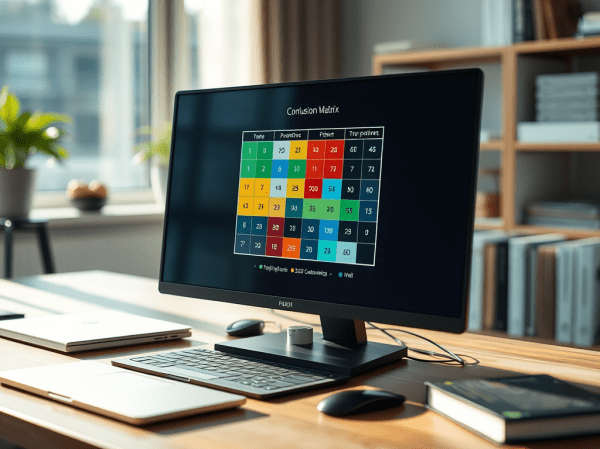

Land of Confusion using Classifications and Metrics for a nonspecific Ground Truth

This blog post examines how the Confusion Matrix can be used to evaluate the performance of large language models (LLMs) on classification tasks, especially legal document analysis. It shows how to calculate the key classification metrics derived from it (Accuracy, Precision, Recall, and F1 score) and highlights the challenges of evaluating against a broadly defined Ground Truth.
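
The four metrics follow directly from the counts in a confusion matrix. The sketch below is a minimal illustration, not the post's own code: it assumes a one-vs-rest view with a single positive class, and the label names ("contract", "invoice", "letter") and function names are hypothetical. It does not address the post's harder question of a nonspecific Ground Truth; it only shows the arithmetic once labels are fixed.

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    """Counts for one positive class versus everything else (one-vs-rest)."""
    tp: int  # predicted positive, actually positive
    fp: int  # predicted positive, actually negative
    fn: int  # predicted negative, actually positive
    tn: int  # predicted negative, actually negative


def confusion_from_labels(y_true, y_pred, positive):
    """Build a one-vs-rest confusion matrix from ground-truth and predicted labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return ConfusionMatrix(tp, fp, fn, tn)


def _safe_div(num, den):
    # Convention used here: report 0.0 when a denominator is zero
    # (e.g. no positive predictions at all).
    return num / den if den else 0.0


def classification_metrics(cm):
    """Accuracy, Precision, Recall and F1 derived from the confusion matrix."""
    total = cm.tp + cm.fp + cm.fn + cm.tn
    accuracy = _safe_div(cm.tp + cm.tn, total)
    precision = _safe_div(cm.tp, cm.tp + cm.fp)
    recall = _safe_div(cm.tp, cm.tp + cm.fn)
    # F1 is the harmonic mean of precision and recall.
    f1 = _safe_div(2 * precision * recall, precision + recall)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Hypothetical labels an LLM might assign to legal documents.
    truth = ["contract", "contract", "invoice", "contract", "letter"]
    preds = ["contract", "invoice", "invoice", "contract", "contract"]
    cm = confusion_from_labels(truth, preds, positive="contract")
    print(cm)
    print(classification_metrics(cm))
```

With the sample labels the matrix is tp=2, fp=1, fn=1, tn=1, so Accuracy is 0.6 while Precision, Recall, and F1 are each about 0.667. Returning 0.0 for an empty denominator is one common convention, not the only one.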

Enhance the LangChain AI Agent Weather Query Example with a Dependency Graph Visualization

This blog post shows how to add a dependency graph visualization to a runnable chain in a LangChain AI Agent example that uses WatsonxLLM for a weather query application.

Implementing a LangChain AI Agent with WatsonxLLM for a Weather Queries application

This blog post describes how the LangChain AI Agent example from IBM Developer was customized to use watsonx in Python. It walks through the implementation of a weather query application step by step, covering model parameters, prompt creation, agent chains, tool definitions, and execution. It also links to additional resources for further exploration.

Does it work to use ChatWatsonx from langchain_ibm to implement an agent that invokes functions?

This blog post explores integrating ChatWatsonx with LangChain for function calls, using a weather example, with the goal of understanding how AI agents use tools and actions. The process includes defining the tool functions, creating a ChatWatsonx instance, and implementing a structured ChatPromptTemplate. Although the experiment was not fully successful, it highlights how much the result depends on the prompt.

Experiment automation for model inference in InstructLab or watsonx

This blog post describes a framework for running experiments on models with InstructLab or watsonx.ai. The accompanying repository automates a question-answering use case with LLMs. The post outlines the setup, architecture, and usage of a Python application driven by shell automation, along with the environment variables used for configuration, and provides detailed instructions and links to the GitHub repository.

Integrating langchain_ibm with watsonx and LangChain for function calls: Example and Tutorial

This blog post demonstrates how to use the ChatWatsonx class from langchain_ibm for "function calls" with LangChain and IBM watsonx™ AI. It provides an example of a chat function call that retrieves weather information for several cities, includes instructions for setting up and running the example, and links to additional resources and examples.