This post discusses a local setup that uses IBM Bob to generate an agent for watsonx Orchestrate with tools from the Galaxium Travels MCP server. It explains the architecture, the customization of Bob, and the integration with the other components, giving developers both a learning resource and a practical implementation example.

AI Grew on Open Knowledge — Will Its Success End That Openness?

This blog post explores the paradox of AI's growth potential versus the increasing trend toward data protectionism. It highlights how AI tools are hindered by data access limitations, posing risks to innovation. The observation implies that as data becomes more valuable, organizations may withhold it, undermining the openness that has historically fueled AI development.

Should MCP Replace REST for AI-Ready Applications?

The article explores the potential for using the Model Context Protocol (MCP) as a primary backend interface instead of traditional REST APIs in AI-enabled applications. Through the Galaxium Travels experiment, it examines the advantages and disadvantages of an MCP-first architecture, advocating for its use to reduce duplication and complexity while acknowledging REST's established role in many ecosystems.

Building a Reproducible AI-Generated Project with ChatGPT, Codex, and Docling in VS Code

A structured experiment using ChatGPT and Codex in VS Code to generate a reproducible open-source Docling preprocessing pipeline with strict engineering constraints.

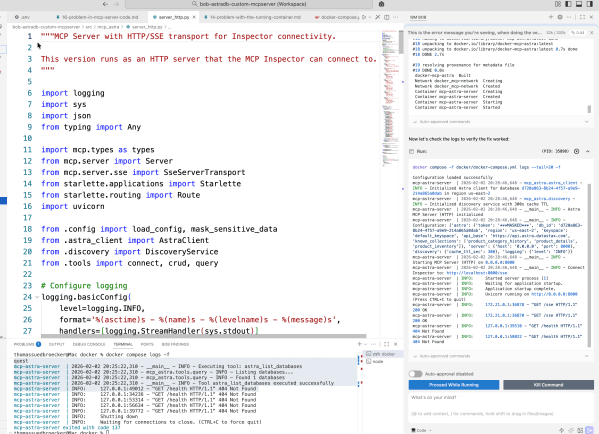

From first ideas to a working MCP server for Astra DB CRUD tools

This blog post details the author's exploration of IBM Bob while building an MCP server for Astra DB. It emphasizes learning through experimentation in Code Mode, focusing on automation and iterative development. The author shares insights on prompt creation, workflow challenges, and the importance of documentation throughout the process, ultimately achieving a functional server setup.

My First Hands-On Experience with IBM Bob: From Planning to RAG Implementation

In this post, I share my initial experiences with IBM Bob, an AI SDLC tool. I discuss setting it up with VS Code, its configurable modes, and its key features. I then show some details of using IBM Bob to build a RAG system, highlighting its strong support for planning, coding, and documentation, which improves workflow efficiency.

RAG is Dead … Long Live RAG

The post explains why traditional Retrieval-Augmented Generation (RAG) approaches no longer scale and how modern architectures, including GraphRAG, address these limitations. It highlights why data quality, metadata, and disciplined system design matter more than models or frameworks, and provides a practical foundation for building robust RAG systems, illustrated with IBM technologies but applicable far beyond them.

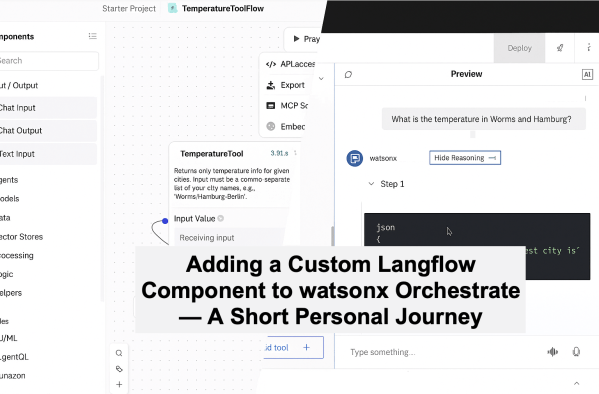

Adding a Custom Langflow Component to watsonx Orchestrate — A Short Personal Journey

This blog post outlines a practical example of building a custom Langflow component that connects to an external weather API and importing that component into the watsonx Orchestrate Development Edition. The process emphasizes learning through experimentation rather than achieving a flawless solution, highlighting the potential of Langflow and watsonx Orchestrate for AI development.

How to Build a Knowledge Graph RAG Agent Locally with Neo4j, LangGraph, and watsonx.ai

The post discusses integrating Knowledge Graphs with Retrieval-Augmented Generation (RAG), specifically using Neo4j and LangGraph. It outlines an example setup where extracted document data forms a structured graph for querying. The system enables natural question-and-answer interactions through AI, enhancing information retrieval with graph relationships and embeddings.

Testing AI Agents with the watsonx Orchestrate Agent Developer Kit (ADK)- Evaluation Framework – A Hands-on Example

The post outlines how to use the Evaluation Framework in the watsonx Orchestrate ADK to verify AI agent behavior through a practical example: Galaxium Travels, a fictional booking system. It details setting up the environment, defining user stories, generating synthetic test cases, and running evaluations, all of which help ensure AI reliability and transparency.