This post covers how to set up Granite 4 models in Ollama for use in VS Code with watsonx Code Assistant. The process includes inspecting available models, uninstalling old versions, installing new models, and configuring them for effective use. The emphasis is on exploration and learning in a private, efficient AI development environment.

Access watsonx Orchestrate functionality over an MCP server

The Model Context Protocol (MCP) is increasingly used in AI applications, including the watsonx Orchestrate ADK. This setup lets users develop and manage agents and tools by connecting the MCP server to the Development Edition, so that watsonx Orchestrate functionality is available directly in coding environments.

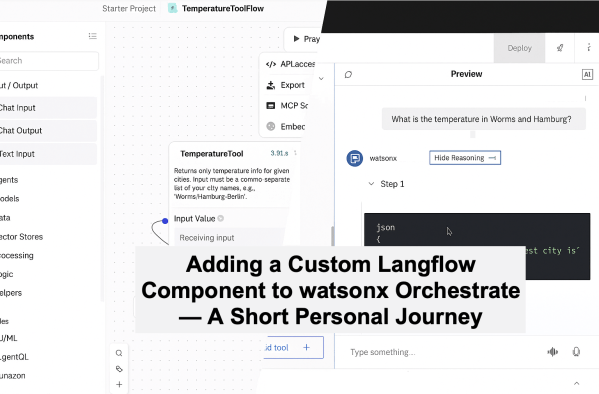

Adding a Custom Langflow Component to watsonx Orchestrate — A Short Personal Journey

This blog post outlines a practical example of setting up a custom component in Langflow to connect with an external weather API and import it into the watsonx Orchestrate Development Edition. The process emphasizes learning through experimentation rather than achieving a flawless solution, highlighting the potential of Langflow and watsonx Orchestrate for AI development.

It’s All About Risk-Taking: Why “Trustworthy” Beats “Deterministic” in the Era of Agentic AI

This post explores how Generative AI and Agentic AI emphasize trustworthiness over absolute determinism. As AI's role in enterprises evolves, organizations must focus on building reliable systems that operate under risk, balancing innovation with accountability. A personal perspective.

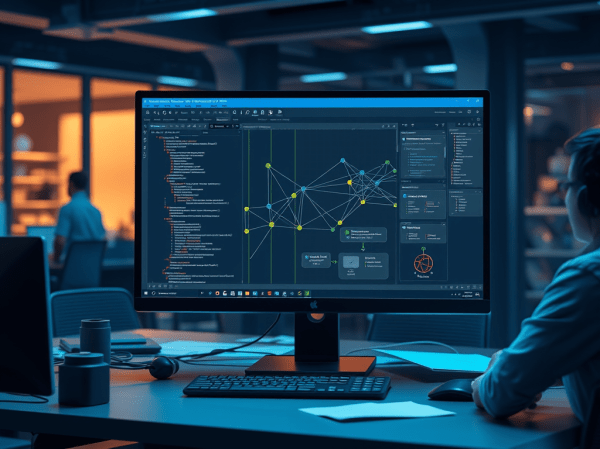

How to Build a Knowledge Graph RAG Agent Locally with Neo4j, LangGraph, and watsonx.ai

The post discusses integrating Knowledge Graphs with Retrieval-Augmented Generation (RAG), specifically using Neo4j and LangGraph. It outlines an example setup where extracted document data forms a structured graph for querying. The system enables natural question-and-answer interactions through AI, enhancing information retrieval with graph relationships and embeddings.

Build, Export & Import a watsonx Orchestrate Agent with the Agent Development Kit (ADK)

This post guides users through building an AI agent locally using the watsonx Orchestrate Agent Development Kit (ADK), exporting it from their local setup, and importing it into a remote instance on IBM Cloud. The process enhances local development while ensuring efficient production deployment.

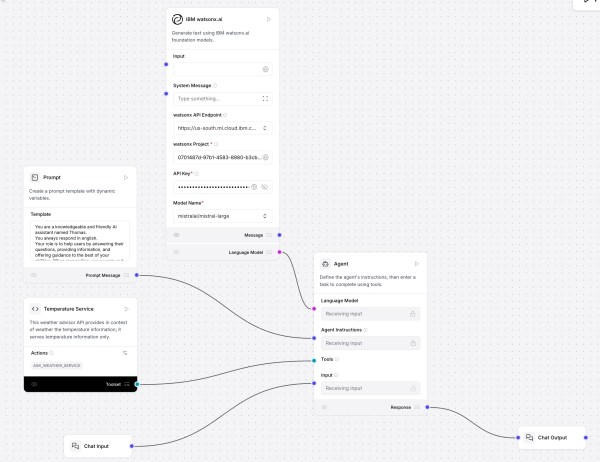

Create Your First AI Agent with Langflow and watsonx

This post shows how to use Langflow with watsonx.ai and a custom component for a “Temperature Service” that fetches and ranks live city temperatures. It covers installation, flow setup, agent prompting, tool integration, and interactive testing. Langflow’s visual design, MCP support, and extensibility offer rapid prototyping; future focus includes DevOps and version control.

Getting Started with Local AI Agents in the watsonx Orchestrate Development Edition

The blog post outlines how to set up the Agent Development Kit (ADK) to build and run AI agents locally using the watsonx Orchestrate Developer Edition. It covers setting up prerequisites, installing the necessary software, and loading an example agent, with optional Langfuse integration for observability.

Exploring the “AI Operational Complexity Cube idea” for Testing Applications integrating LLMs

The post explores the integration of Large Language Models (LLMs) in applications, stressing the need for effective production testing. It introduces the AI Operational Complexity Cube concept, emphasizing new testing dimensions for LLMs, including prompt testing and user engagement. A structured testing approach is proposed to ensure reliability and robustness.

Deploying an InstructLab Fine-Tuned Model on IBM watsonx Inference: A SaaS Guide

This blog post explains how to deploy a fine-tuned model to IBM watsonx on IBM Cloud. It highlights the advantages of using this platform, such as avoiding infrastructure management and ensuring enterprise security, and provides detailed steps for configuring, deploying, and accessing the model from IBM watsonx.