This post describes a local setup that uses IBM Bob to generate an agent for watsonx Orchestrate, using tools from the Galaxium Travels MCP server. It explains the architecture, how Bob is customized, and how the various components are integrated, offering developers both learning material and a practical implementation.

AI Grew on Open Knowledge — Will Its Success End That Openness?

This blog post explores the tension between AI's growth potential and the increasing trend toward data protectionism. It shows how limited data access hinders AI tools and puts innovation at risk. The implication is that as data becomes more valuable, organizations may withhold it, undermining the openness that has historically fueled AI development.

Should MCP Replace REST for AI-Ready Applications?

The article explores using the Model Context Protocol (MCP) as the primary backend interface instead of traditional REST APIs in AI-enabled applications. Through the Galaxium Travels experiment, it examines the advantages and disadvantages of an MCP-first architecture, arguing for its use to reduce duplication and complexity while acknowledging REST's established role in many ecosystems.

Building a Reproducible AI-Generated Project with ChatGPT, Codex, and Docling in VS Code

A structured experiment using ChatGPT and Codex in VS Code to generate a reproducible open-source Docling preprocessing pipeline with strict engineering constraints.

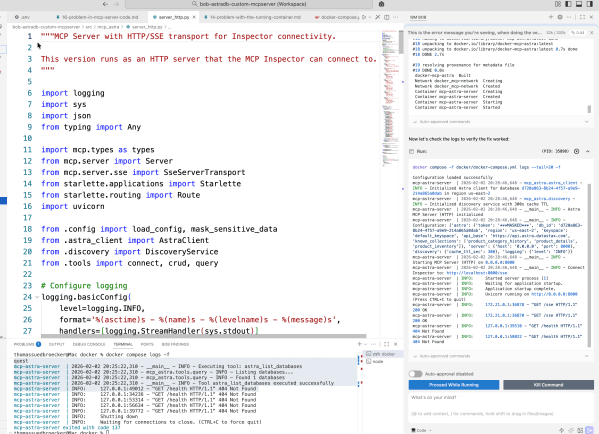

From first ideas to a working MCP server for Astra DB CRUD tools

This blog post details the author's exploration of IBM Bob while building an MCP server for Astra DB. It emphasizes learning through experimentation in Code Mode, with a focus on automation and iterative development. The author shares insights on prompt creation, workflow challenges, and the importance of documentation throughout the process, ending with a working server setup.

A Bash Cheat Sheet: Adding a Local Ollama Model to watsonx Orchestrate

The post discusses automating local testing of IBM watsonx Orchestrate with Ollama models using a Bash script. The script simplifies the setup process and ensures that connections and configurations are correct. It starts the services and confirms that the model is accessible, reducing common setup errors.

A Bash Cheat Sheet: Adding a Model to Local watsonx Orchestrate

This post describes a Bash automation script for setting up the IBM watsonx Orchestrate Development Edition. The script automates tasks such as resetting the environment, starting the server, and configuring credentials, making the workflow more efficient. It addresses common setup issues so that the process is repeatable and succeeds reliably.

RAG is Dead … Long Live RAG

The post explains why traditional Retrieval-Augmented Generation (RAG) approaches no longer scale and how modern architectures, including GraphRAG, address these limitations. It highlights why data quality, metadata, and disciplined system design matter more than models or frameworks, and provides a practical foundation for building robust RAG systems, illustrated with IBM technologies but applicable far beyond them.

Update Ollama to use Granite 4 in VS Code with watsonx Code Assistant

This post covers setting up Granite 4 models in Ollama for use with watsonx Code Assistant in VS Code. The process includes inspecting the available models, uninstalling old versions, installing the new models, and configuring them for effective use. The experience emphasizes exploration and learning in a private, efficient AI development environment.

Access watsonx Orchestrate functionality over an MCP server

The Model Context Protocol (MCP) is increasingly used in AI applications, including with the watsonx Orchestrate ADK. The setup described here integrates the MCP server with the Development Edition, allowing users to develop and manage agents and tools from within their coding environment.