This post describes a local setup that uses IBM Bob to generate an agent for watsonx Orchestrate, with tools from the Galaxium Travels MCP server. It explains the architecture, the customization of Bob, and the integration with the other components, so developers can both learn from the setup and apply it in practice.

AI Grew on Open Knowledge — Will Its Success End That Openness?

This blog post explores the paradox of AI's growth potential versus the increasing trend toward data protectionism. It highlights how AI tools are hindered by data access limitations, posing risks to innovation. The observation implies that as data becomes more valuable, organizations may withhold it, undermining the openness that has historically fueled AI development.

Should MCP Replace REST for AI-Ready Applications?

The article explores the potential for using the Model Context Protocol (MCP) as a primary backend interface instead of traditional REST APIs in AI-enabled applications. Through the Galaxium Travels experiment, it examines the advantages and disadvantages of an MCP-first architecture, advocating for its use to reduce duplication and complexity while acknowledging REST's established role in many ecosystems.

Building a Reproducible AI-Generated Project with ChatGPT, Codex, and Docling in VS Code

A structured experiment using ChatGPT and Codex in VS Code to generate a reproducible open-source Docling preprocessing pipeline with strict engineering constraints.

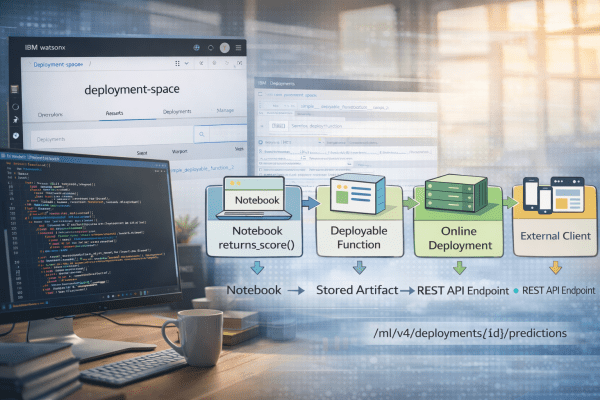

Cheat Sheet: Deploying a Function in watsonx.ai Studio – A Step-by-Step Guide

This post outlines the process of creating a deployable function in Jupyter Notebook using watsonx.ai, which is then exposed via a REST API. It emphasizes the separation of development in projects and runtime in deployment spaces, detailing steps from environment setup to function deployment and usage of the API endpoint.

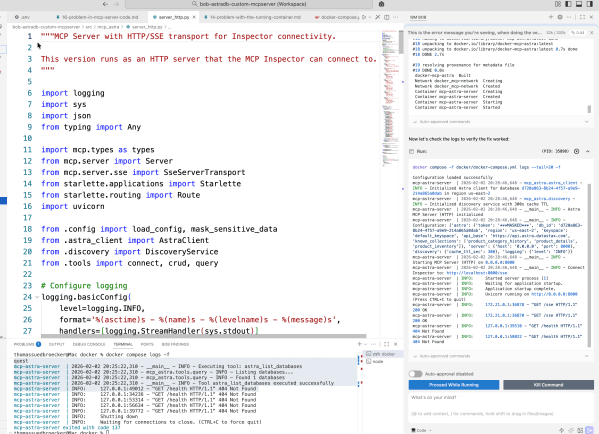

From first ideas to a working MCP server for Astra DB CRUD tools

This blog post details the author's exploration of IBM Bob while building an MCP server for Astra DB. It emphasizes learning through experimentation in Code Mode, focusing on automation and iterative development. The author shares insights on prompt creation, workflow challenges, and the importance of documentation throughout the process, ultimately achieving a functional server setup.

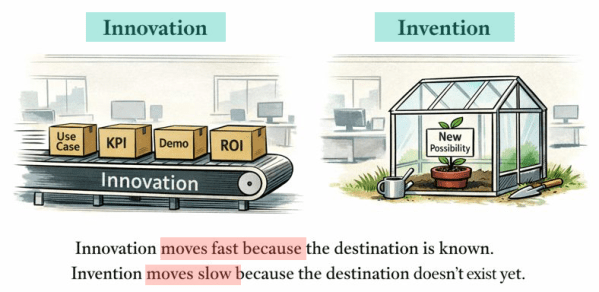

Innovation Is Eating Invention — and GenAI Is Accelerating It

The post discusses how the current focus on fast, outcome-driven innovation in the GenAI landscape risks sidelining invention, which nurtures genuine new possibilities. It emphasizes that while innovation thrives in KPI-oriented settings, invention often struggles for justification. The author calls for a deliberate balance to preserve the space for invention in future developments.

A Bash Cheat Sheet: Adding a Local Ollama Model to watsonx Orchestrate

The post discusses automating local testing of IBM watsonx Orchestrate with Ollama models using a Bash script. The script simplifies the setup process and ensures proper connections and configurations. It starts the services and confirms that the model is accessible, which reduces typical setup errors.

A Bash Cheat Sheet: Adding a Model to Local watsonx Orchestrate

This post describes a Bash automation script for setting up the IBM watsonx Orchestrate Development Edition. The script automates tasks like resetting the environment, starting the server, and configuring credentials, allowing for a more efficient workflow. It addresses common setup issues, ensuring a repeatable and successful process.

My First Hands-On Experience with IBM Bob: From Planning to RAG Implementation

In this post, I share my initial experiences with IBM Bob, an AI SDLC tool. I discuss setting it up with VS Code, its configurable modes, and its key features. I also show some details of using IBM Bob to build a RAG system, highlighting its impressive support for planning, coding, and documentation, which enhances workflow efficiency.