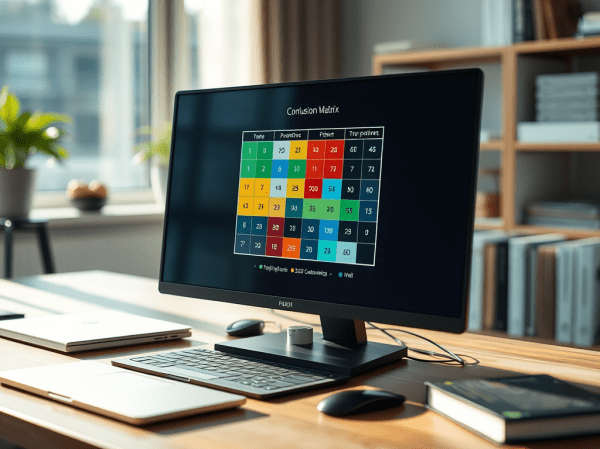

This blog post examines the Confusion Matrix as a metric for evaluating the performance of large language models (LLMs) in classification tasks, especially legal document analysis. It discusses the calculation of key classification metrics like Accuracy, Precision, Recall, and F1 score, emphasizing the challenges of using a broadly defined Ground Truth.
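As a rough illustration of how those four metrics follow from a binary confusion matrix, here is a minimal sketch. The function name `classification_metrics` and the counts are hypothetical and are not taken from the post; they only show the arithmetic.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Derive Accuracy, Precision, Recall, and F1 from binary confusion-matrix counts.

    tp/fp/fn/tn are true positives, false positives, false negatives,
    and true negatives. Empty denominators fall back to 0.0.
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical counts, chosen only for illustration.
metrics = classification_metrics(tp=40, fp=10, fn=5, tn=45)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

How a prediction gets counted as a true or false positive depends on how the Ground Truth is defined, which is the part the post flags as difficult.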

Enhance the LangChain AI Agent Weather Query Example with a Dependency Graph Visualization

This blog post shows how to add a dependency graph visualization to a runnable chain in the LangChain AI Agent example that uses WatsonxLLM for a weather query application.

Implementing LangChain AI Agent with WatsonxLLM for a Weather Queries application

This blog post describes the customization of the LangChain AI Agent example from IBM Developer using Watsonx in Python. It walks through the implementation of a weather query application step by step, covering model parameters, prompt creation, agent chains, tool definitions, and execution. It also links to resources for further exploration.

Integrating langchain_ibm with watsonx and LangChain for function calls: Example and Tutorial

The blog post demonstrates using the ChatWatsonx class of langchain_ibm for "function calls" with LangChain and IBM watsonx™ AI. It provides an example of a chat function call for weather information for various cities. The post also includes instructions to set up and run the example. Additional resources and examples are also provided.

InstructLab and Taxonomy tree: LLM Foundation Model Fine-tuning Guide | Musician Example

The blog post introduces InstructLab, a project by IBM and Red Hat, and outlines the fine-tuning process for the model "MODELS/MERLINITE-7B-LAB-Q4_K_M.GGUF." The process covers data preparation, model training, testing, and conversion, and ends with serving the model to verify its accuracy, using a personal musician example.

Fine-tune LLM foundation models with InstructLab, an open-source project introduced by IBM and Red Hat

This blog post provides a step-by-step guide to setting up the InstructLab CLI on an Apple laptop with an M3 chip, including an overview of InstructLab and its benefits. It also lists the supported models and gives detailed setup instructions. Additionally, it refers to a Red Hat YouTube demonstration and highlights the project's potential impact.

Using CUDA and Llama-cpp to Run a Phi-3-Small-128K-Instruct Model on IBM Cloud VSI with GPUs

The popularity of llama.cpp and the optimized GGUF model format is growing. This post outlines the steps to run "Phi-3-Small-128K-Instruct" in GGUF format with llama.cpp on an IBM Cloud VSI with GPUs and Ubuntu 22.04. It covers VSI setup, CUDA toolkit installation, compilation, the Python environment, model usage, and additional resources.

Getting started with Text Generation Inference (TGI) using a container to serve your LLM model

This blog post outlines a bash automation for setting up and testing Text Generation Inference (TGI) in a container. It provides instructions for creating a Python test client, starting the TGI server, and troubleshooting common issues. The post emphasizes the benefits of using containers and references Hugging Face and NVIDIA technologies.

How to create a FastAPI server to use OpenAI models

Last time, I wrote a blog post about "IBM Watsonx.ai and a simple question-answering pipeline using Python and FastAPI". After an exchange with my family about an OpenAI sample for a FastAPI application, I created a small FastAPI server to access OpenAI with Python.

How to set up Caikit and use Hugging Face models examples

This short blog post shows how to set up a demo environment for using Caikit and Hugging Face models on your local machine.