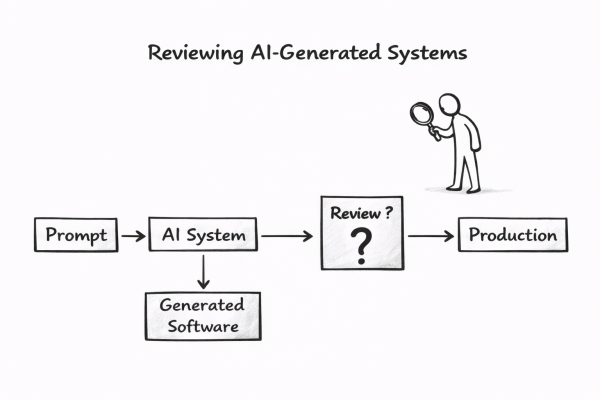

AI is transforming the software development lifecycle, shifting focus from coding to reviewing AI-generated systems. While AI tools simplify software generation, building trustworthy systems remains complex. Traditional review processes may no longer suffice. This raises a critical question: how can humans responsibly…

Exploring the “AI Operational Complexity Cube idea” for Testing Applications integrating LLMs

The post explores the integration of Large Language Models (LLMs) in applications, stressing the need for effective production testing. It introduces the AI Operational Complexity Cube concept, emphasizing new testing dimensions for LLMs, including prompt testing and user engagement. A structured testing approach is proposed to ensure reliability and robustness.