The blog post explores integrating ChatWatsonx with LangChain for function calls, using a weather example. It aims to build an understanding of AI agent tools and actions. The process includes defining tool functions, creating a ChatWatsonx instance, and implementing a structured ChatPromptTemplate. While the result is not fully successful, the post highlights the importance of the prompt.

Integrating langchain_ibm with watsonx and LangChain for function calls: Example and Tutorial

The blog post demonstrates using the ChatWatsonx class of langchain_ibm for "function calls" with LangChain and IBM watsonx™ AI. It provides an example of a chat function call for weather information for various cities. The post also includes instructions to set up and run the example. Additional resources and examples are also provided.
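To make the "function call" idea concrete, here is a minimal sketch of the dispatch step in plain Python. It does not use ChatWatsonx or LangChain; the `get_weather` tool, the `TOOLS` registry, and the shape of the simulated tool call are assumptions made for illustration, and the tool returns placeholder text rather than real weather data.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical tool: returns placeholder text instead of calling a weather API."""
    return f"Weather lookup requested for {city} (placeholder, no real data)"


# Registry that maps tool names to Python callables (illustrative only).
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(tool_call_json: str) -> str:
    """Run the tool named in a model-produced tool call and return its result."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call["name"])
    if tool is None:
        raise ValueError(f"Unknown tool: {call['name']}")
    return tool(**call["args"])


# Simulated model output; in the post, a chat model would produce this structure.
simulated_call = json.dumps({"name": "get_weather", "args": {"city": "Berlin"}})
result = dispatch_tool_call(simulated_call)
print(result)
```

In a real setup the chat model decides which tool to call and with which arguments; the application code only has to validate and execute that request, as `dispatch_tool_call` does here.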

Using CUDA and Llama-cpp to Run a Phi-3-Small-128K-Instruct Model on IBM Cloud VSI with GPUs

The popularity of llama.cpp and the optimized GGUF format for models is growing. This post outlines the steps to run "Phi-3-Small-128K-Instruct" in GGUF format with llama.cpp on an IBM Cloud VSI with GPUs and Ubuntu 22.04. It covers VSI setup, the CUDA toolkit, compilation, the Python environment, model usage, and additional resources.

Python PDF to JSON Conversion for Efficient Data Pre-processing

Converting a PDF to JSON is a simple task in Python. The conversion can be helpful in various data pre-processing situations.
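One possible shape for such a conversion is sketched below. It assumes the third-party `pypdf` package for text extraction, which may not be the library the post uses; the function names and the JSON layout (`source`, `pages`, `page`, `text`) are also illustrative choices.

```python
import json


def extract_pages(pdf_path: str) -> list[str]:
    """Read the text of each page. Assumes the third-party pypdf package is installed."""
    from pypdf import PdfReader  # assumption: pypdf, not necessarily the post's library

    reader = PdfReader(pdf_path)
    return [page.extract_text() or "" for page in reader.pages]


def pages_to_json(pages: list[str], source: str) -> str:
    """Wrap per-page text in a JSON document with 1-based page numbers."""
    records = [
        {"page": number, "text": text.strip()}
        for number, text in enumerate(pages, start=1)
    ]
    return json.dumps({"source": source, "pages": records}, indent=2)


# Usage with in-memory sample text, so the sketch runs without a PDF file.
sample = pages_to_json(["First page text ", "Second page text"], "example.pdf")
print(sample)
```

For a real file, `pages_to_json(extract_pages("example.pdf"), "example.pdf")` would combine both steps.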

Getting started with Text Generation Inference (TGI) using a container to serve your LLM model

This blog post outlines a bash automation for setting up and testing Text Generation Inference (TGI) using a container. It provides instructions for creating a Python test client, starting the TGI server, and troubleshooting common issues. The post emphasizes the benefits of using containers and references Hugging Face and NVIDIA technologies.
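A Python test client for a TGI server could look roughly like the following sketch, which uses only the standard library and TGI's `/generate` endpoint. The base URL `http://localhost:8080`, the prompt, and the function names are assumptions; the post's own client may differ.

```python
import json
import urllib.request


def build_generate_request(
    base_url: str, prompt: str, max_new_tokens: int = 20
) -> urllib.request.Request:
    """Build a POST request for TGI's /generate endpoint."""
    payload = {"inputs": prompt, "parameters": {"max_new_tokens": max_new_tokens}}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(base_url: str, prompt: str) -> str:
    """Send the request and return the generated text. Requires a running TGI server."""
    request = build_generate_request(base_url, prompt)
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read())["generated_text"]


# Building the request does not need a server; the address is an assumed local one.
request = build_generate_request("http://localhost:8080", "What is deep learning?")
print(request.full_url)
```

Calling `generate("http://localhost:8080", "What is deep learning?")` only works once the TGI container is up and listening on that address.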

CheatSheet: How to ensure you use the right Python environment in VS Code interpreter settings?

This post covers how to ensure that VS Code uses the Python virtual environment you created with venv. It details creating and activating a Python venv and making sure it is used in VS Code. The steps include opening the VS Code command palette, selecting an interpreter, and navigating to the pyvenv.cfg file.
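As a quick check outside VS Code, the sketch below creates a throwaway environment with the standard `venv` module, reads its `pyvenv.cfg`, and reports whether the current interpreter itself runs inside a virtual environment. The helper names and the temporary `.venv` location are illustrative assumptions.

```python
import sys
import tempfile
import venv
from pathlib import Path


def read_pyvenv_cfg(env_dir: Path) -> dict[str, str]:
    """Parse the 'key = value' lines of a virtual environment's pyvenv.cfg."""
    cfg = {}
    for line in (env_dir / "pyvenv.cfg").read_text().splitlines():
        if "=" in line:
            key, _, value = line.partition("=")
            cfg[key.strip()] = value.strip()
    return cfg


def in_virtual_environment() -> bool:
    """True when the running interpreter belongs to a virtual environment."""
    return sys.prefix != sys.base_prefix


# Create a temporary environment (without pip, to keep it fast) and inspect it.
with tempfile.TemporaryDirectory() as tmp:
    env_dir = Path(tmp) / ".venv"
    venv.create(env_dir, with_pip=False)
    cfg = read_pyvenv_cfg(env_dir)

print("Running inside a venv:", in_virtual_environment())
print("Base interpreter directory:", cfg.get("home"))
```

Running `in_virtual_environment()` from the VS Code integrated terminal or a notebook cell is a simple way to confirm that the selected interpreter is the one from your venv.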

Unleash your creativity and design a custom visualization for the Shelly 3EM device with Grafana

The blog post details an example implementation of a connection server using Shelly 3EM, IBM Cloud Cloudant, and Grafana. It aims to store historical data for visualizing electricity consumption. The post describes the architecture, the environment setup, and the use of Python, FastAPI, Podman, and more. The setup covers Raspberry Pi, Podman Compose, and IBM Cloud Code Engine environments, with prerequisites and detailed configurations. The approach allows users to monitor and visualize power consumption efficiently and cost-effectively using Grafana.

How to create a FastAPI server to use OpenAI models

Last time, I wrote a blog post about "IBM Watsonx.ai and a simple question-answering pipeline using Python and FastAPI". After an exchange with my family about an OpenAI sample for a FastAPI application, I created a small FastAPI server to access OpenAI with Python.

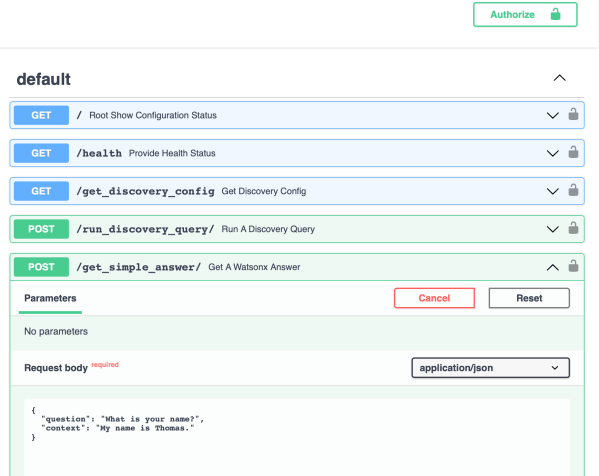

Write a simple question-answering pipeline with IBM watsonx.ai, IBM Watson Discovery by using Python and FastAPI

This blog post describes an example implementation of a simple question-answering pipeline that uses an inside-search (IBM Cloud Watson Discovery) and a prompt (IBM watsonx with Prompt Lab) to create an answer.

How to set up Caikit and use Hugging Face models examples

This short blog post shows how to set up a demo environment for using Caikit and Hugging Face models on your local machine.