This longer blog post shows how to: build a model init container with a custom model for Watson NLP for Embed; upload the model init container to the IBM Cloud Container Registry; deploy the model init container and the Watson NLP runtime to an IBM Cloud Kubernetes cluster; and test the Watson NLP runtime with the loaded model using the REST API.

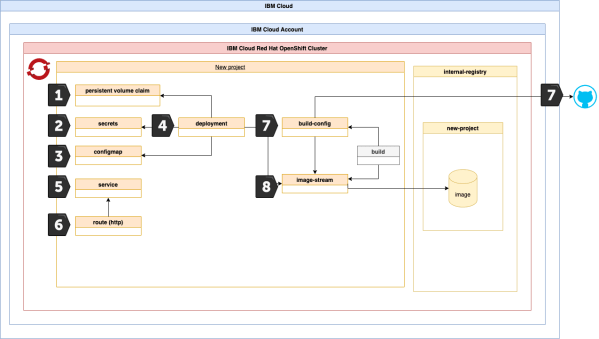

Using the internal OpenShift container registry to deploy an application

This cheat sheet extends an earlier blog post of mine, "Configure a project in an IBM Cloud Red Hat OpenShift cluster to access the IBM Cloud Container Registry". In that post we pulled the container images for our example application from the IBM Cloud Container Registry. In this cheat sheet we use the Red Hat OpenShift internal container registry and the Docker build strategy to deploy the same example application to OpenShift.

How to use environment variables to make a containerized Quarkus application more flexible

When you run a containerized application on a container orchestration platform like Kubernetes or OpenShift, on a serverless framework like Knative or Code Engine, or on other platforms, it is helpful to pass the endpoints of other applications to the containerized application through environment variables. When the container is restarted, these variables can be provided to it again, and no change to the source code is necessary. You can use ConfigMaps or, in Code Engine, simply the environment variable itself.
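As a rough illustration of the idea, the sketch below reads an endpoint from an environment variable and falls back to a local default when the variable is not set. It is plain Java rather than the configuration mechanism the post itself may use, and the class name `EndpointConfig`, the variable name `ARTICLES_URL`, and the default URL are all assumptions made up for this example.

```java
import java.util.Map;

// Hypothetical helper: class name, variable name and default URL are illustrative only.
final class EndpointConfig {

    // Returns the value of the given variable, or the fallback when it is unset or blank.
    static String resolve(Map<String, String> env, String name, String fallback) {
        String value = env.get(name);
        return (value == null || value.isBlank()) ? fallback : value.trim();
    }

    public static void main(String[] args) {
        // System.getenv() exposes the variables the platform injected when the container started.
        String articlesUrl = resolve(System.getenv(), "ARTICLES_URL", "http://localhost:8083");
        System.out.println("Calling articles service at " + articlesUrl);
    }
}
```

Passing the environment in as a map keeps the lookup easy to test locally, while the platform (a ConfigMap, or a Code Engine environment variable) decides the real value at deployment time.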

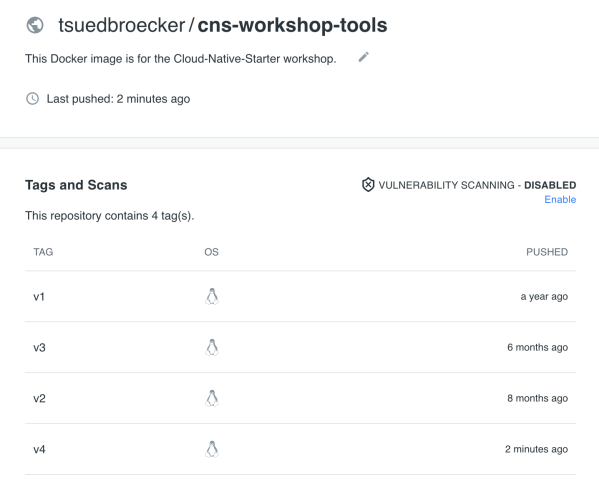

Build a Docker image, push it to Docker hub and clean up local disk space

This blog post covers the tasks to create a Docker image, upload it to Docker Hub, and clean up the image and container on the local machine. Sections: Upload, Run, Push (or save), Clean up. Upload the image: 1. Create a local Docker image using docker build. Dockerhub account name: tsuedbroecker. Dockerhub repository name:... Continue Reading →

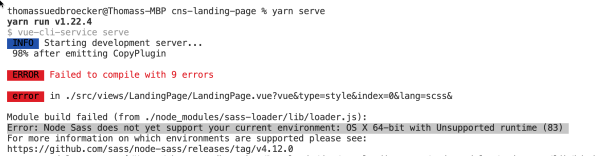

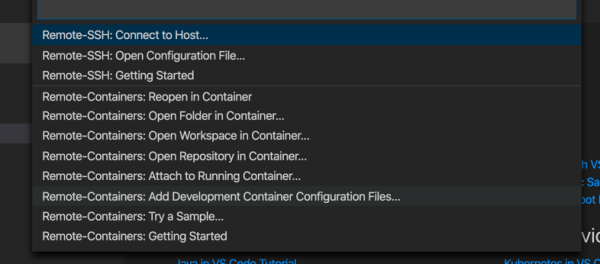

Error: Node Sass does not yet support your current environment: OS X 64-bit with Unsupported runtime (83) … using a remote development container to run the Vue.js application

In this blog post I want to show how to set up a remote development container for a Vue.js application that doesn't run on my local machine, even after updating Node.js, npm and yarn.

Run a PostgreSQL container as a non-root user in OpenShift

In this blog post I want to point out a simple topic: how to run a simple PostgreSQL Docker image as a non-production container in OpenShift. As you may know, OpenShift by default doesn't allow container images to run as root. The image below shows the result of simply deploying the PostgreSQL image from Docker Hub. It's possible... Continue Reading →

Setup a MongoDB in less than 4 min on a free IBM Cloud Kubernetes cluster at a Hackathon

In this blog post I want to highlight that I just created a GitHub project and a 10 min YouTube video on "How to setup mongoDB in less than 4 min on a free IBM Cloud Kubernetes cluster at a Hackathon". My objective is to provide a small guide on how to set up a MongoDB server... Continue Reading →

(Outdated) Run a Docker image as a Cloud Foundry App on IBM Cloud

In this blog post I want to point out an awesome topic: "Run a Docker container image as a Cloud Foundry App on IBM Cloud". Rainer Hochecker, Simon Moser and I had an interesting exchange about running a Docker image as a Cloud Foundry App on IBM Cloud. The advantage of that approach is: you... Continue Reading →

Run a MicroProfile Microservice on OpenLiberty in a Remote development container in Visual Studio Code

In this blog post I want to show how to set up a local remote Java development container for Eclipse MicroProfile with OpenLiberty in Visual Studio Code. I did that for the Authors microservice from the Cloud Native Starter project with MicroProfile 3.2, OpenJDK Java 11, and the latest OpenLiberty version. That blog post is structured... Continue Reading →

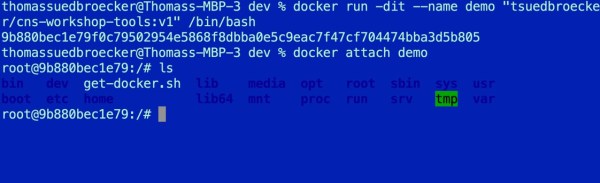

Docker container in detached and attached mode

This is a very short blog post about using a Docker container in detached and attached mode. Sometimes workshop participants close the terminal session of a container that was running in interactive mode and then want to reconnect to their existing container... Continue Reading →