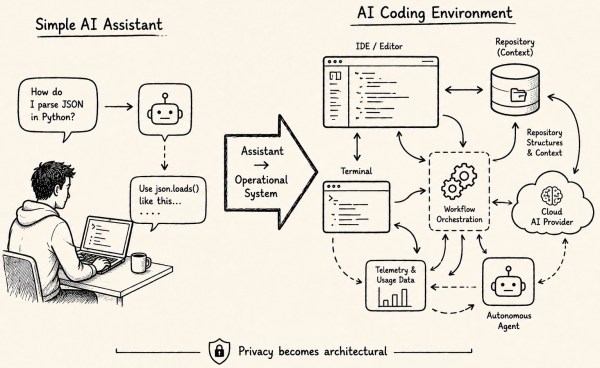

This post compares various AI coding assistants/agents, emphasizing privacy from a developer's perspective. It highlights how modern AI systems function as integral tools in software development, shifting the privacy discourse from mere data training concerns to broader issues like data sovereignty, operational exposure, and pricing implications, particularly for individual developers.

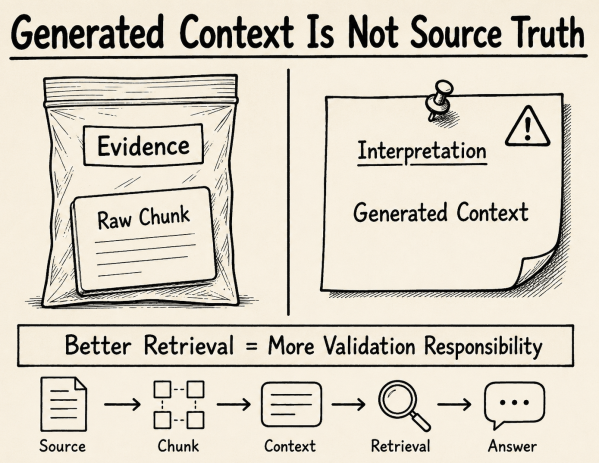

Contextual Retrieval with Milvus: Better Retrieval, More Validation Responsibility

This post reflects on Contextual Retrieval with Milvus in RAG systems. It explains how generated context can improve chunk retrieval, but also changes the retrieval corpus. Once generated context is indexed, validation, traceability, and quality control become architectural responsibilities—not optional implementation details.

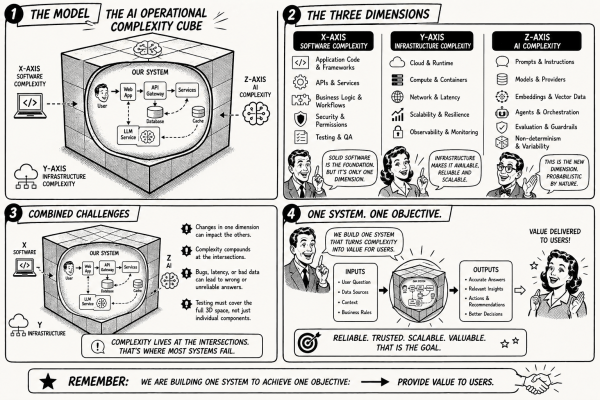

Revisiting the AI Operational Complexity Cube: From LLM Testing to AI Systems in Production

The article continues the exploration of the AI Operational Complexity Cube, emphasizing that modern AI systems encompass software, infrastructure, and probabilistic AI components. It highlights the need for comprehensive testing approaches that consider interactions across these dimensions, as proper evaluation requires observing behaviors that emerge from integrated systems rather than isolated code.

AI Conference 2026 — When Observations Become Confirmation About Real AI Systems

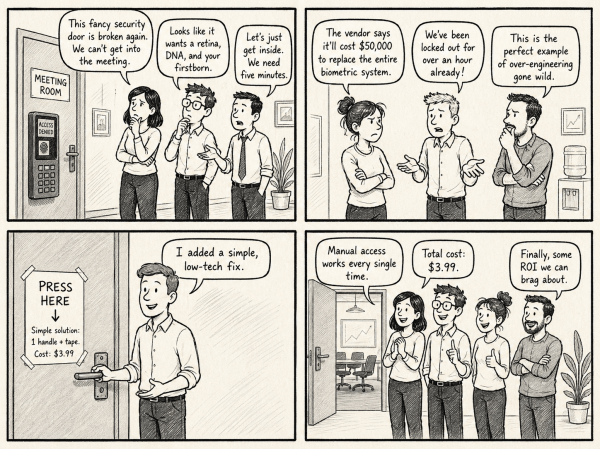

The AI Conference 2026 highlighted the evolution of AI discussions from theoretical capabilities to operational realities. Key themes included the importance of data quality, operational complexity, and risk management. Attendees noted a shift in software development roles towards reviewing AI outputs, emphasizing the need for understanding real problems rather than solely implementing complex solutions.

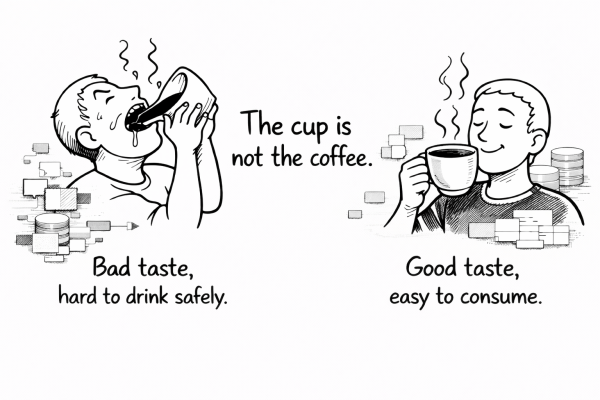

The Cup Is Not the Coffee: What Data Quality Means in the AI Era

AI systems rely heavily on data quality, yet it is often overlooked even in modern technical architectures. Outdated, incomplete, or misaligned data can undermine system reliability, no matter how sophisticated the components are. Effective AI requires both high-quality data and solid technical infrastructure to meet user expectations and earn trust.

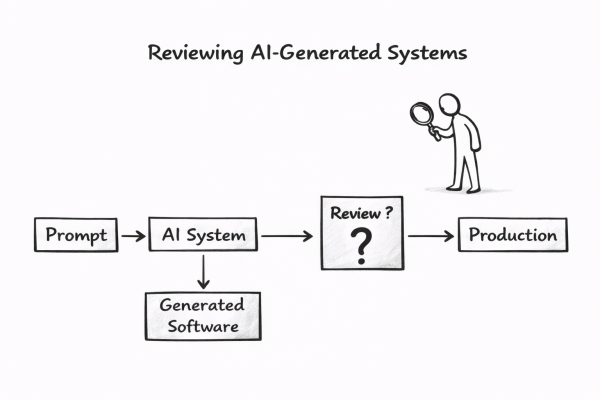

Who Reviews AI-Generated Software?

AI is transforming the software development lifecycle, shifting focus from coding to reviewing AI-generated systems. While AI tools simplify software generation, building trustworthy systems remains complex. Traditional review processes may no longer suffice. This raises a critical question: how can humans responsibly...

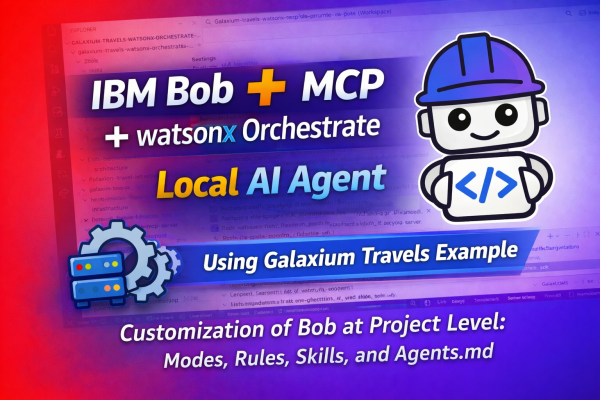

Using IBM Bob, MCP, and watsonx Orchestrate to Generate an Agent

This post discusses a local setup utilizing IBM Bob to generate an agent for watsonx Orchestrate, specifically with tools from the Galaxium Travels MCP server. It explains the architecture, customization of Bob, and integration with various components, providing both learning and practical implementation value for developers.

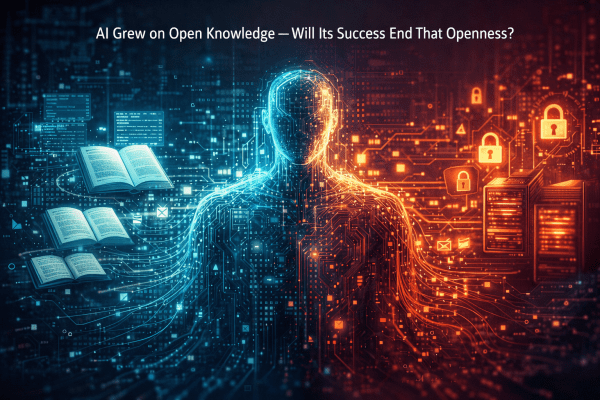

AI Grew on Open Knowledge — Will Its Success End That Openness?

This blog post explores the paradox between AI's growth potential and the increasing trend toward data protectionism. It highlights how AI tools are hindered by data access limitations, which poses risks to innovation. As data becomes more valuable, organizations may withhold it, undermining the openness that has historically fueled AI development.

Should MCP Replace REST for AI-Ready Applications?

The article explores the potential for using the Model Context Protocol (MCP) as a primary backend interface instead of traditional REST APIs in AI-enabled applications. Through the Galaxium Travels experiment, it examines the advantages and disadvantages of an MCP-first architecture, advocating for its use to reduce duplication and complexity while acknowledging REST's established role in many ecosystems.

Building a Reproducible AI-Generated Project with ChatGPT, Codex, and Docling in VS Code

A structured experiment using ChatGPT and Codex in VS Code to generate a reproducible open-source Docling preprocessing pipeline with strict engineering constraints.