This post is a continuation of my earlier article, “Exploring the AI Operational Complexity Cube idea for Testing Applications integrating LLMs”.

In the earlier post, I introduced the cube mainly from the perspective of testing LLM-based applications. In this follow-up, I want to make the cube clearer and easier to apply as a practical mental model for AI systems in production.

The main idea remains the same:

AI systems are not only software systems.

They are software systems, infrastructure systems, and probabilistic AI systems at the same time.

The cube helps illustrate how modern AI systems combine multiple independent layers of operational complexity.

- From Classical Systems to AI Systems

- The AI Operational Complexity Cube

- Deterministic vs. Non-Deterministic Complexity

  - Deterministic complexity

  - Non-Deterministic complexity

- Testing LLM-based Applications

  - Example: AI Agent System

- Why This Model Helps

- What Changed Compared to the Original Cube Idea

- Summary

- References and additional resources

1. From Classical Systems to AI Systems

Traditional software systems are mostly deterministic. AI-driven systems differ from them in several fundamental ways:

| Classical Software Systems | AI-driven Systems |
|---|---|
| Deterministic behaviour | Probabilistic responses |
| Code defines behaviour | Model influences behaviour |
| Unit and integration tests | Evaluation, prompt testing, and behavioural testing |
| APIs and services | Agents and model orchestration |
| Fixed logic | Adaptive responses |

This shift changes how we approach testing, validation, and system reliability.

When integrating LLMs into applications, we are no longer testing only code; we are testing system behaviour that emerges from multiple interacting components.

2. The AI Operational Complexity Cube

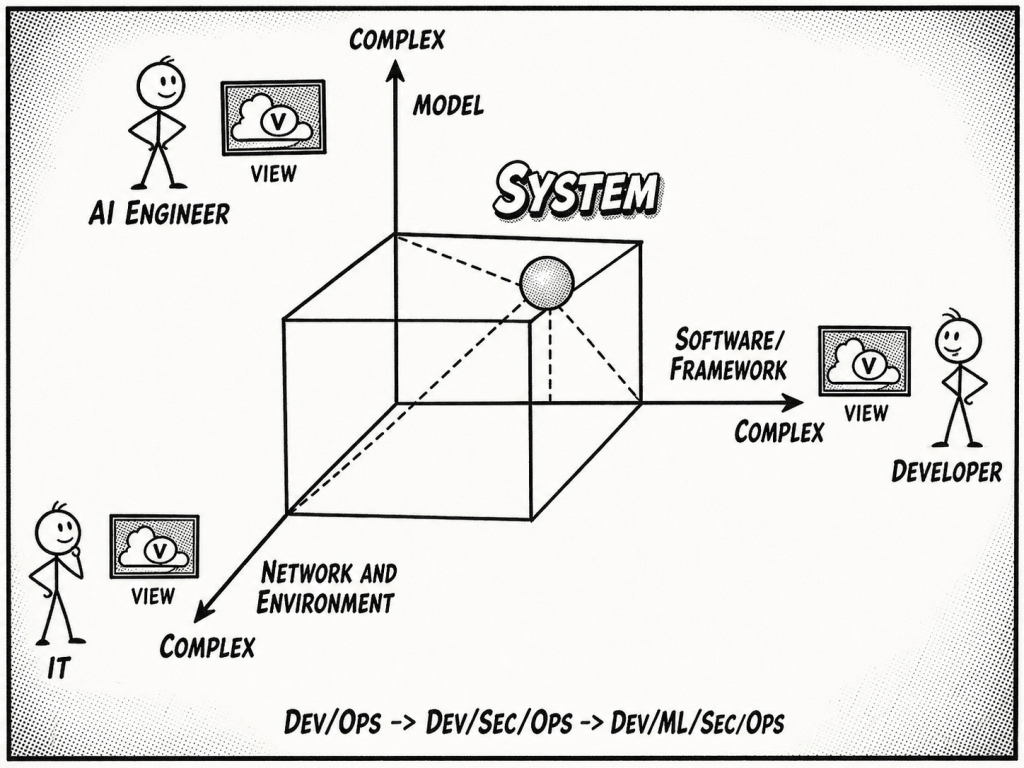

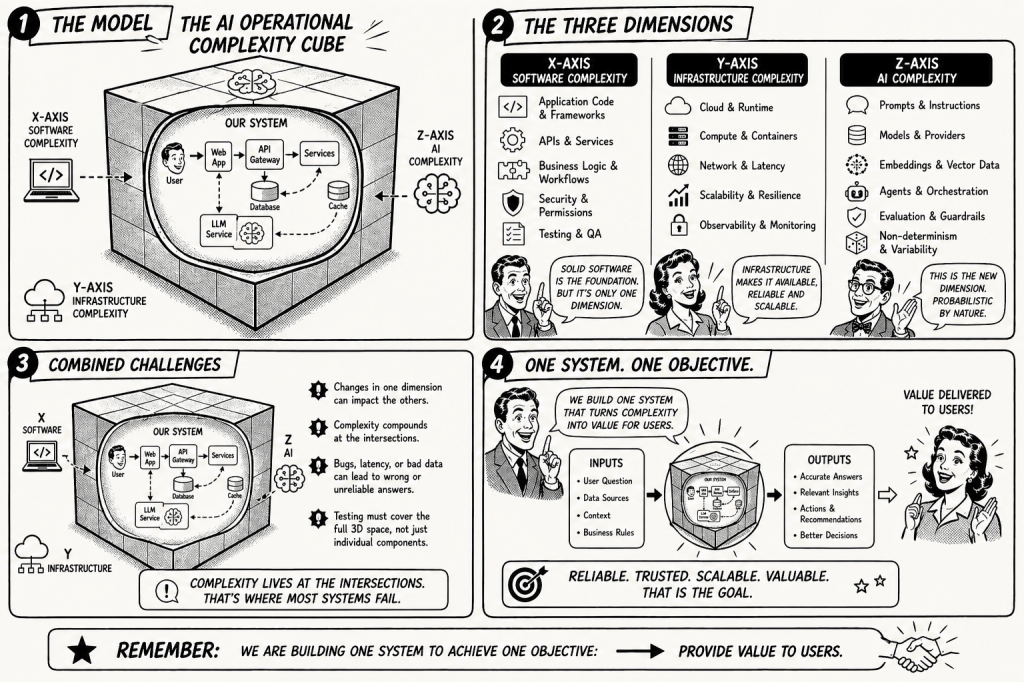

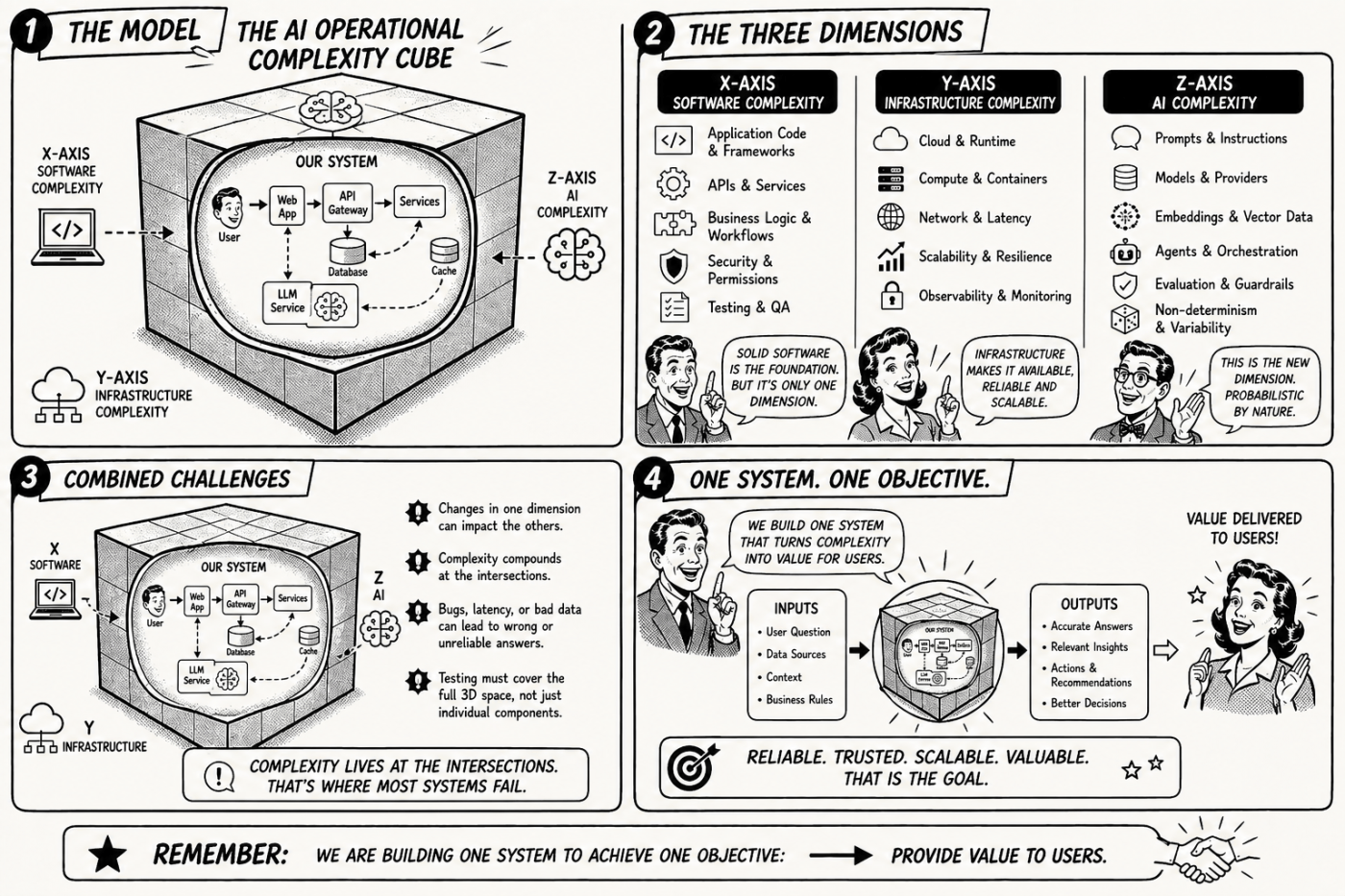

To better understand these challenges, I started visualizing the operational complexity of AI systems as a cube with three dimensions.

Each axis represents a different category of complexity.

| Dimension | Description |
|---|---|
| Software Complexity | Application logic, frameworks, APIs, orchestration code |
| Infrastructure Complexity | Cloud runtime, containers, networking, scaling |
| AI Complexity | Prompts, models, embeddings, agents, guardrails, evaluation |

These dimensions exist independently but interact strongly with each other.

For example:

- A prompt change may influence model output.

- Infrastructure latency may influence agent behaviour.

- Software orchestration may amplify model errors.

The cube helps illustrate that modern AI systems operate across all three dimensions simultaneously.
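To make the second interaction concrete, here is a minimal Python sketch, assuming a hypothetical retrieval tool (`slow_search`) and agent step (`agent_answer`) with an illustrative 0.2 s latency budget. It shows how pure infrastructure latency can change the agent's path: when the tool is too slow, the agent answers without retrieved context, so the same question yields a differently grounded answer.

```python
import time


def slow_search(query: str, delay_s: float) -> list[str]:
    """Hypothetical retrieval tool; delay_s simulates network or cold-start latency."""
    time.sleep(delay_s)
    return [f"doc about {query}"]


def agent_answer(query: str, delay_s: float, budget_s: float = 0.2) -> dict:
    """Hypothetical agent step that only uses tool results arriving within budget_s."""
    start = time.perf_counter()
    docs = slow_search(query, delay_s)
    elapsed = time.perf_counter() - start
    # A real system would cancel the call with a timeout; this sketch simply
    # waits and discards the late result.
    if elapsed > budget_s:
        # Infrastructure latency changes behaviour: the answer is now ungrounded.
        return {"grounded": False, "context": [], "elapsed_s": elapsed}
    return {"grounded": True, "context": docs, "elapsed_s": elapsed}


print(agent_answer("refund policy", delay_s=0.0)["grounded"])  # True
print(agent_answer("refund policy", delay_s=0.3)["grounded"])  # False
```

Neither the model nor the code changed between the two calls; only the simulated latency did.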

3. Deterministic vs. Non-Deterministic Complexity

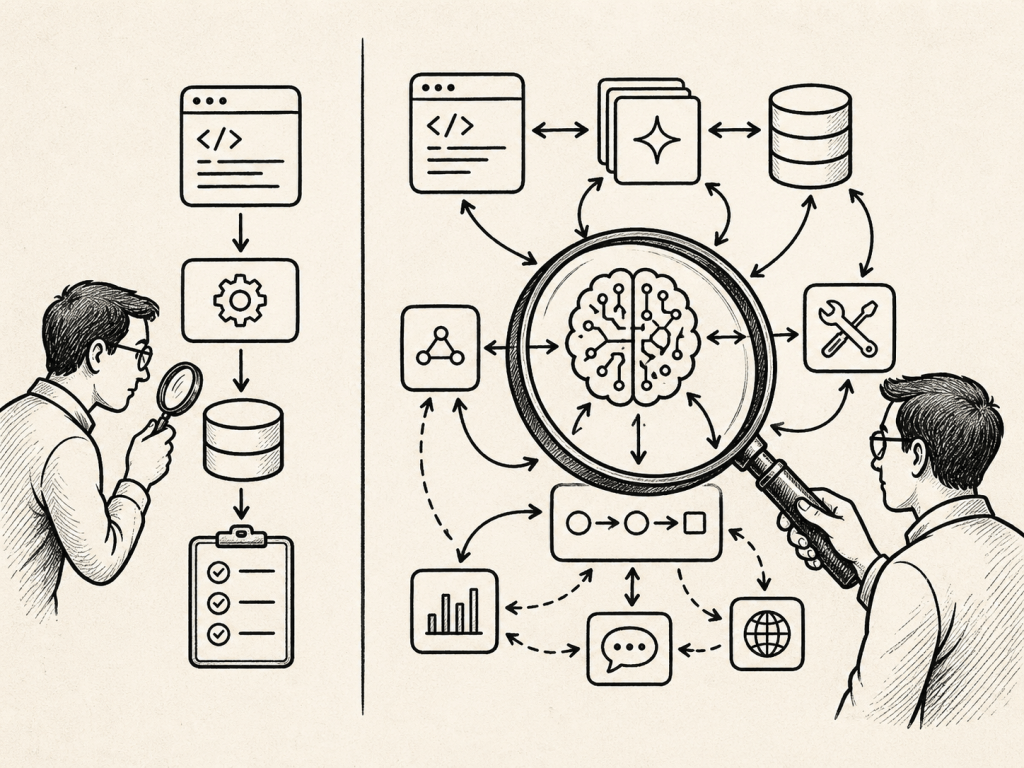

One of the key insights when working with AI systems is the difference between deterministic and non-deterministic complexity.

Classical testing checks defined components. AI system evaluation must observe behaviour across software, infrastructure, data, tools, and models.

3.1 Deterministic complexity

Traditional software systems behave predictably.

Examples:

- APIs return defined responses

- Databases return consistent query results

- Infrastructure failures are observable

Testing strategies include:

- unit testing

- integration testing

- load testing

- fault injection
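For contrast with the non-deterministic case, here is a minimal Python sketch of a classical unit test, assuming a hypothetical business rule `apply_discount`. Because the same input always yields the same output, exact assertions are sufficient.

```python
def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical deterministic business rule, using integer cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


def test_apply_discount() -> None:
    # Same input, same output on every run: exact assertions are enough.
    assert apply_discount(10_000, 15) == 8_500
    assert apply_discount(999, 0) == 999
    try:
        apply_discount(100, 120)
    except ValueError:
        pass  # invalid input is rejected, as specified
    else:
        raise AssertionError("expected ValueError for percent > 100")


test_apply_discount()
```

A test runner such as pytest would discover `test_apply_discount` automatically; the direct call at the end just keeps the sketch runnable on its own.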

3.2 Non-Deterministic complexity

AI systems introduce probabilistic behaviour.

Examples include:

- different responses to the same prompt

- hallucinated information

- prompt sensitivity

- tool selection variations in agent frameworks

This requires new testing approaches, including:

- prompt evaluation

- model benchmarking

- guardrails and safety validation

- human-in-the-loop review and evaluation
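A minimal Python sketch of prompt evaluation under non-determinism. `fake_llm` is a hypothetical stand-in for a real model call that samples from a few phrasings, including one deliberate hallucination; the answer weights, sample count, and 85% threshold are illustrative assumptions. Instead of asserting one exact string, the evaluation samples the same prompt many times, scores each output with a property check, and gates on the pass rate.

```python
import random
from collections import Counter
from typing import Callable


def fake_llm(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a sampled model call (temperature > 0)."""
    answers = [
        "The capital of France is Paris.",
        "Paris is the capital of France.",
        "It is Paris.",
        "The capital of France is Lyon.",  # simulated hallucination
    ]
    return rng.choices(answers, weights=[0.45, 0.35, 0.15, 0.05], k=1)[0]


def evaluate_prompt(prompt: str, check: Callable[[str], bool],
                    n_samples: int = 200, seed: int = 0) -> tuple[float, Counter]:
    """Sample the same prompt repeatedly and score each output with a property check."""
    rng = random.Random(seed)  # fixed seed keeps the evaluation run reproducible
    outputs = [fake_llm(prompt, rng) for _ in range(n_samples)]
    pass_rate = sum(check(o) for o in outputs) / n_samples
    return pass_rate, Counter(outputs)


pass_rate, variants = evaluate_prompt(
    "What is the capital of France?",
    check=lambda out: "Paris" in out,  # a property, not an exact string match
)
print(f"pass rate {pass_rate:.1%} across {len(variants)} distinct outputs")
assert pass_rate >= 0.85, "factuality below release threshold"
```

Fixing the seed makes the evaluation run reproducible; it does not make the underlying model deterministic. With a real model, the sampling loop would call the model instead, and the threshold would come from the team's own acceptance criteria.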

4. Testing LLM-based Applications

Testing applications that integrate LLMs means testing across all three cube dimensions.

| Cube Dimension | Example Testing Strategy |
|---|---|
| Software | integration tests, API testing |
| Infrastructure | latency testing, scaling tests |
| AI | prompt evaluation, hallucination and factuality checks |
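One way to keep all three dimensions visible is to tag each check with the dimension it covers and report results per dimension. A minimal Python sketch, assuming a hypothetical `ask` endpoint that returns a canned answer; the check names and the 0.5 s latency budget are illustrative.

```python
import time
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Dimension(Enum):
    SOFTWARE = "software"
    INFRASTRUCTURE = "infrastructure"
    AI = "ai"


@dataclass
class Check:
    name: str
    dimension: Dimension
    run: Callable[[], bool]


def ask(question: str) -> dict:
    """Hypothetical application endpoint with a canned, grounded answer."""
    return {"answer": "Refunds are possible within 30 days.", "sources": ["policy.md"]}


def within_latency_budget(budget_s: float = 0.5) -> bool:
    start = time.perf_counter()
    ask("What is the refund policy?")
    return time.perf_counter() - start <= budget_s


CHECKS = [
    Check("response has expected fields", Dimension.SOFTWARE,
          lambda: set(ask("What is the refund policy?")) == {"answer", "sources"}),
    Check("answers within latency budget", Dimension.INFRASTRUCTURE, within_latency_budget),
    Check("answer cites at least one source", Dimension.AI,
          lambda: len(ask("What is the refund policy?")["sources"]) > 0),
]


def run_suite(checks: list[Check]) -> dict[Dimension, list[tuple[str, bool]]]:
    """Group results by cube dimension so gaps in coverage are visible."""
    report: dict[Dimension, list[tuple[str, bool]]] = {d: [] for d in Dimension}
    for check in checks:
        report[check.dimension].append((check.name, check.run()))
    return report


for dimension, results in run_suite(CHECKS).items():
    print(dimension.value, results)
```

An empty bucket in the report is itself a finding: it shows a dimension the test suite does not cover yet.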

The complexity increases significantly when using agent frameworks, because the system may:

- call tools

- query databases

- reason across multiple steps

- dynamically generate prompts

In these cases, testing must focus on system behaviour rather than only component correctness.
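A minimal Python sketch of behaviour-level testing for an agent, assuming a hypothetical `run_agent` whose tool choice is faked by a keyword router, and hypothetical tools `order_db` and `web_search`. Rather than checking one function's return value, the test records a trace of steps and asserts properties of the whole run: the expected tool was called, no forbidden tool was used, the run stayed within a step budget, and the final answer actually uses a tool output.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    kind: str    # "tool_call" or "final_answer"
    name: str
    output: str


@dataclass
class Trace:
    steps: list[Step] = field(default_factory=list)

    def tools_called(self) -> list[str]:
        return [s.name for s in self.steps if s.kind == "tool_call"]


# Hypothetical tools with canned outputs.
TOOLS = {
    "order_db": lambda q: "order 42: shipped",
    "web_search": lambda q: "generic web result",
}


def run_agent(question: str) -> Trace:
    """Hypothetical agent; a keyword router stands in for model-driven tool selection."""
    trace = Trace()
    tool = "order_db" if "order" in question.lower() else "web_search"
    result = TOOLS[tool](question)
    trace.steps.append(Step("tool_call", tool, result))
    trace.steps.append(Step("final_answer", "answer", f"Based on {tool}: {result}"))
    return trace


def check_trace(trace: Trace, expected_tool: str, forbidden: set[str], max_steps: int) -> list[str]:
    """Return behavioural problems found in the trace (an empty list means pass)."""
    problems = []
    called = set(trace.tools_called())
    if expected_tool not in called:
        problems.append(f"expected tool {expected_tool!r} was not called")
    if forbidden & called:
        problems.append(f"forbidden tools used: {sorted(forbidden & called)}")
    if len(trace.steps) > max_steps:
        problems.append(f"too many steps: {len(trace.steps)} > {max_steps}")
    if not trace.steps or trace.steps[-1].kind != "final_answer":
        problems.append("run did not end with a final answer")
    elif not any(s.output in trace.steps[-1].output
                 for s in trace.steps if s.kind == "tool_call"):
        problems.append("final answer ignores all tool outputs")
    return problems


print(check_trace(run_agent("Where is my order 42?"), "order_db", {"web_search"}, max_steps=4))  # []
```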

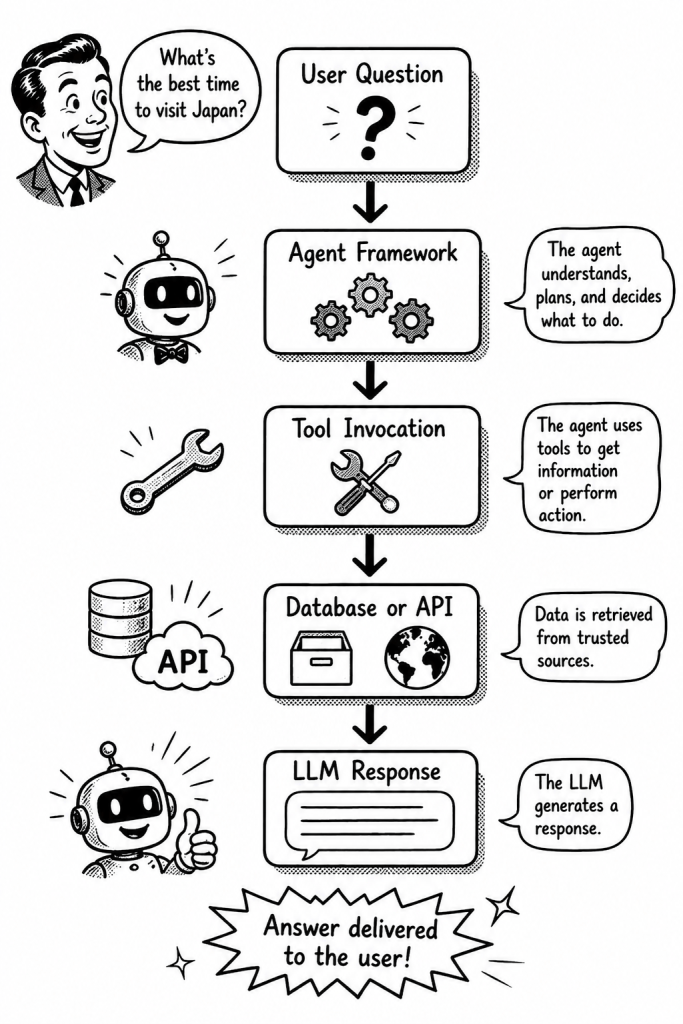

4.1 Example: AI Agent System

Consider a simplified architecture of an AI agent system.

Testing such a system requires evaluating:

- prompt variations

- tool selection behaviour

- API reliability

- model hallucination risk

- response consistency

Together, these aspects span all three dimensions of the AI Operational Complexity Cube, and some of them, such as tool selection and API reliability, touch more than one dimension at once.
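Prompt variations and tool selection behaviour can be checked together by sending paraphrases of the same intent and measuring how consistently the same tool is chosen. A minimal Python sketch, assuming a hypothetical keyword-based `select_tool` in place of the model's real tool choice:

```python
from collections import Counter


def select_tool(question: str) -> str:
    """Hypothetical tool router standing in for the model's tool choice."""
    if "order" in question.lower():
        return "order_db"
    return "web_search"


# Four paraphrases with the same intent.
PARAPHRASES = [
    "Where is my order 42?",
    "Has order #42 shipped yet?",
    "Track my package for order 42.",
    "Can you check the status of my purchase 42?",
]


def tool_consistency(questions: list[str]) -> tuple[float, Counter]:
    """Share of questions routed to the most frequently chosen tool."""
    choices = Counter(select_tool(q) for q in questions)
    most_common_count = choices.most_common(1)[0][1]
    return most_common_count / len(questions), choices


score, choices = tool_consistency(PARAPHRASES)
print(f"consistency={score:.2f}, choices={dict(choices)}")
# consistency=0.75, choices={'order_db': 3, 'web_search': 1}
```

The fourth paraphrase means the same thing but avoids the word "order", so the router picks a different tool. That is the kind of prompt sensitivity a consistency score surfaces; a team might, for example, require a score of 1.0 for paraphrases that share one intent.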

5. Why This Model Helps

The cube is not a strict framework. It is simply a mental model that helps engineers reason about system complexity.

Instead of looking at testing from a single perspective, we can evaluate systems across three dimensions:

- software behaviour

- operational infrastructure

- AI model dynamics

This helps teams design more robust testing strategies for AI-driven systems.

6. What Changed Compared to the Original Cube Idea

In the original post, I mainly used the cube to think about testing LLM-based applications.

After working more with AI agents, tool integration, evaluation frameworks, and production-oriented AI systems, I would now describe the cube more explicitly as an operational model.

The important shift is this:

We are not only testing prompts.

We are testing complete AI-enabled systems.

That means we need to observe and evaluate:

- software behaviour

- infrastructure behaviour

- model behaviour

- tool usage

- data quality

- orchestration logic

- human responsibility

This is where the cube becomes useful.

It reminds us that problems in AI systems rarely belong to only one layer. A bad answer may come from the model, but it may also come from wrong data, poor orchestration, latency, missing guardrails, or unclear ownership.
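The same point can be encoded in triage tooling: record signals from every layer for each request, and only treat the model as the prime suspect when nothing upstream looks wrong. A minimal Python sketch, assuming a hypothetical `InteractionRecord` schema; the field names and thresholds (a 2,000 ms latency budget, more than two retries) are illustrative, not a standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InteractionRecord:
    """Hypothetical per-request record with at least one signal per layer."""
    model_id: str                 # model
    retrieved_docs: int           # data: context documents found
    stale_docs: int               # data: documents past their freshness date
    tool_errors: int              # tools
    orchestration_retries: int    # orchestration
    latency_ms: float             # infrastructure
    guardrail_checked: bool       # guardrails
    owner: Optional[str]          # human responsibility


def candidate_causes(r: InteractionRecord, latency_budget_ms: float = 2000.0) -> list[str]:
    """List plausible causes of a bad answer, layer by layer."""
    causes = []
    if r.retrieved_docs == 0:
        causes.append("data: no supporting documents retrieved")
    if r.stale_docs > 0:
        causes.append(f"data: {r.stale_docs} stale document(s) in context")
    if r.tool_errors > 0:
        causes.append(f"tools: {r.tool_errors} failed tool call(s)")
    if r.orchestration_retries > 2:
        causes.append("orchestration: excessive retries")
    if r.latency_ms > latency_budget_ms:
        causes.append("infrastructure: latency over budget")
    if not r.guardrail_checked:
        causes.append("guardrails: output was not validated")
    if r.owner is None:
        causes.append("ownership: no accountable owner for this flow")
    # Only when nothing upstream looks wrong does the model become the prime suspect.
    if not causes:
        causes.append(f"model: inspect behaviour of {r.model_id}")
    return causes


bad_answer = InteractionRecord(
    model_id="example-model", retrieved_docs=4, stale_docs=2, tool_errors=0,
    orchestration_retries=0, latency_ms=3500.0, guardrail_checked=True, owner="support-team",
)
print(candidate_causes(bad_answer))
# ['data: 2 stale document(s) in context', 'infrastructure: latency over budget']
```

The checks are deliberately crude; the point is the order of reasoning, not the specific thresholds.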


7. Summary

The AI Operational Complexity Cube helps to show that modern AI systems combine three different types of complexity:

- software complexity

- infrastructure complexity

- AI complexity

Classical testing is still necessary, but it is no longer sufficient.

For AI-enabled systems, we also need evaluation, observability, guardrails, data quality checks, and human responsibility.

The key point is simple:

- We are no longer only testing code.

- We are testing the behaviour of complete AI systems.

That behaviour emerges from software, infrastructure, data, models, tools, and people working together.

This is why AI systems need engineering discipline, not only better models.

8. References and additional resources

This article extends my earlier post about the AI Operational Complexity Cube and LLM application testing. The following references helped me connect the idea with AI risk management, site reliability engineering, and current research on LLM testing and evaluation.

- NIST: Artificial Intelligence Risk Management Framework: Generative AI Profile
  https://www.nist.gov/publications/artificial-intelligence-risk-management-framework-generative-artificial-intelligence

- NIST: AI Risk Management Framework
  https://www.nist.gov/itl/ai-risk-management-framework

- Google SRE Book: Monitoring Distributed Systems
  https://sre.google/sre-book/monitoring-distributed-systems/

- Google SRE Book: Table of Contents
  https://sre.google/sre-book/table-of-contents/

- Challenges in Testing Large Language Model Based Applications
  https://arxiv.org/html/2503.00481v1

- Non-Determinism of “Deterministic” LLM Settings
  https://arxiv.org/html/2408.04667v4

- Testing and Evaluation of Large Language Models: Correctness, Non-Toxicity, and Fairness
  https://arxiv.org/abs/2409.00551

- Testing AIware Systems: A Software Engineering Survey
  https://openreview.net/attachment?id=09wqHDxwbG&name=pdf

Note: This post reflects my own ideas and experience; AI was used only as a writing and thinking aid to help structure and clarify the arguments, not to define them.

#ai, #llm, #aiengineering, #aiops, #mlops, #devops, #devsecops, #softwareengineering, #llmtesting, #aitesting, #agenticai, #aisystems, #aiinproduction, #observability, #riskmanagement, #guardrails, #dataquality, #systemdesign, #cloudnative, #generativeai
