In this blog post we will add a webhook to our existing operator project Multi Tenancy Frontend Operator. We start from the update-operator branch, where we created the v2alpha2 API version for the operator in the last blog post "Add a new API version to an existing operator". You find the final implementation for the current blog post in the webhook-gen-operator branch (details about conversion webhook).

Yes, this is a very long blog post, because I wanted to include almost all changes that were made during the development. Two blog posts are very useful in this context: the blog post about a webhook with an operator by Srutha K, and Configuring Webhooks for Kubernetes Operators by Niklas Heidloff.

Our starting point is that our Custom Resource Definition contains two API versions, v1alpha1 and v2alpha2.

At the moment we use the v2alpha2 API to create a frontend web-application with our operator.

We will add the v1beta1 API version to our example.

Maybe you are wondering why we create a v1beta1 API version.

Here is an example of what happens when we later try to create a bundle to run the operator with a webhook that only converts between v1alpha1 and v2alpha2: we are simply not allowed to bundle it, because of the conversionReviewVersions restrictions.

We will get the following error message:

ERRO[0000] Error: Field spec.conversion.conversionReviewVersions, Value [v1alpha1 v2alpha2]: spec.conversion.conversionReviewVersions: Invalid value: []string{"v1alpha1", "v2alpha2"}: must include at least one of v1, v1beta1

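It seems that, based on the API versioning naming guidelines in the Kubernetes documentation, we need to add a v1beta1 API version to our example to be able to bundle the conversion webhook. Strictly speaking, conversionReviewVersions lists the versions of the ConversionReview object that the webhook understands and not the versions of our own API; the approach works because v1beta1 is one of the accepted values.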
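To make the error message above more tangible, here is a minimal Go sketch of the kind of check that rejects the bundle. It is an illustrative re-implementation and not the actual validator code of the Operator SDK; the function name hasSupportedReviewVersion is hypothetical.

```go
package main

import "fmt"

// supportedReviewVersions mirrors the two values named in the error message.
var supportedReviewVersions = map[string]bool{"v1": true, "v1beta1": true}

// hasSupportedReviewVersion is a hypothetical helper. It reports whether the
// list contains at least one of the supported values.
func hasSupportedReviewVersion(versions []string) bool {
	for _, v := range versions {
		if supportedReviewVersions[v] {
			return true
		}
	}
	return false
}

func main() {
	// The list from the error message is rejected.
	fmt.Println(hasSupportedReviewVersion([]string{"v1alpha1", "v2alpha2"})) // false
	// The list we use later in this post is accepted.
	fmt.Println(hasSupportedReviewVersion([]string{"v1alpha1", "v1beta1"})) // true
}
```

The second call shows why the list used later in this post passes the check: it contains v1beta1.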

- Alpha definition:

  - The version names contain alpha (for example, v1alpha1).

  - The software may contain bugs. Enabling a feature may expose bugs. A feature may be disabled by default.

  - The support for a feature may be dropped at any time without notice.

  - The API may change in incompatible ways in a later software release without notice.

  - The software is recommended for use only in short-lived testing clusters, due to increased risk of bugs and lack of long-term support.

With the new required v1beta1 version, we will only change how v1alpha1 custom resource objects are handled in the controller implementation.

Objective

By using a webhook we want our operator to convert the v1alpha1 API specification to a v1beta1 API specification automatically, before the custom resource object is created inside the Kubernetes cluster.

For example, when we deploy a custom resource object with the API version v1alpha1 to the cluster using kubectl apply, that object will be transformed to the v1beta1 API version and saved in the etcd of our Kubernetes cluster.

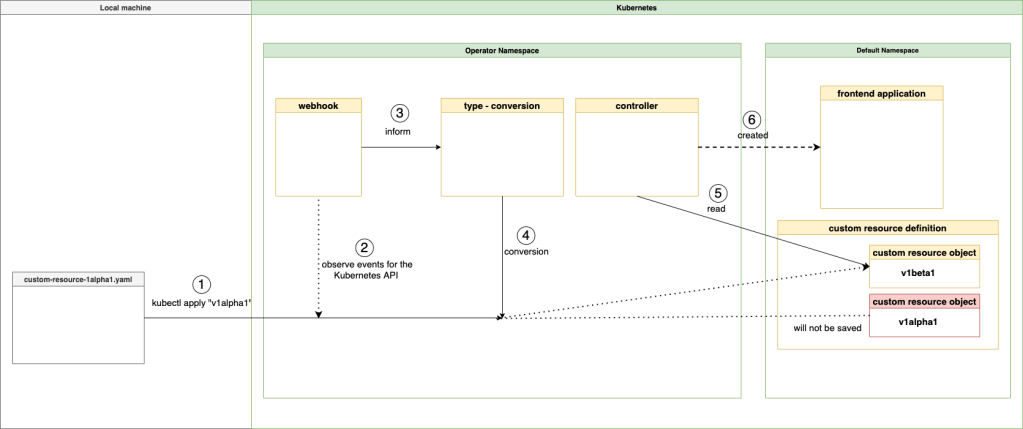

Simplified overview

With a webhook we are informed about events before they reach the Kubernetes API server. Let us take a look at the following simplified diagram to visualize some dependencies and get a basic understanding of what will happen.

Note: The diagram doesn't contain the information about the TLS certificate (for details, see the Operator SDK documentation).

- We deploy a custom resource object in the v1alpha1 version using kubectl apply

- The conversion webhook gets that event before the event reaches the Kubernetes API server

- The conversion webhook signals that the v1alpha1 API version needs to be converted

- The API version will be converted from v1alpha1 to v1beta1, and v1beta1 is saved

- The controller of the operator will now read the v1beta1 version of the custom resource object

- The controller will create the frontend application using the v1beta1 API of the operator

In other words, the version v1beta1 will always be created, but v1alpha1 will be accepted for the creation.

Here is a GIF with these 6 steps:

The Sequence we will follow

This is the sequence we will follow to get the example operator running.

- Clone the project

- Add a v1beta1 API version

- Add a webhook and a conversion to the operator

- Implement the conversion for the existing APIs

- Configure some files to use the webhook and a conversion

- Create and push a controller-manager image to a container registry

- Create and push a bundle image to a container registry

- Create and push a new catalog image to a container registry

- Create a Catalog source and a Subscription specification

1. Clone the example project Multi Tenancy Frontend Operator

- Clone

git clone https://github.com/thomassuedbroecker/multi-tenancy-frontend-operator.git

- Navigate to the folder

cd multi-tenancy-frontend-operator/frontendOperator

- Checkout the update-operator branch

git checkout update-operator

2. Add a v1beta1 API version

This works the same way as in the last blog post.

Step 1: Create the new v1beta1 API

operator-sdk create api --group multitenancy --version v1beta1 --kind TenancyFrontend --resource

Step 2: Copy the type definition

Copy TenancyFrontendSpec from api.v2alpha2.tenancyfrontend_types.go to api.v1beta1.tenancyfrontend_types.go.

That is what you should find and copy:

type TenancyFrontendSpec struct {
	// INSERT ADDITIONAL SPEC FIELDS - desired state of cluster
	// Important: Run "make" to regenerate code after modifying this file

	// Size is an example field of TenancyFrontend. Edit tenancyfrontend_types.go to remove/update
	// +kubebuilder:validation:Required
	// +kubebuilder:validation:Minimum=0
	Size int32 `json:"size"`

	// +kubebuilder:validation:Required
	// +kubebuilder:validation:MaxLength=15
	DisplayName string `json:"displayname,omitempty"`

	// +kubebuilder:validation:MaxLength=15
	// +kubebuilder:default:=Movies
	CatalogName string `json:"catalogname,omitempty"`
}

Step 3: Change the storageversion

- Remove // +kubebuilder:storageversion from v2alpha2

- Add // +kubebuilder:storageversion to v1beta1

//+kubebuilder:object:root=true
//+kubebuilder:subresource:status
//+kubebuilder:storageversion

// TenancyFrontend is the Schema for the tenancyfrontends API
type TenancyFrontend struct {
	metav1.TypeMeta   `json:",inline"`
	metav1.ObjectMeta `json:"metadata,omitempty"`

	Spec   TenancyFrontendSpec   `json:"spec,omitempty"`
	Status TenancyFrontendStatus `json:"status,omitempty"`
}
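A CustomResourceDefinition must mark exactly one version as the storage version, which is why the marker is moved and not copied. The following sketch illustrates that rule with a simplified stand-in type; crdVersion and storageVersion are hypothetical names and not part of the generated code.

```go
package main

import "fmt"

// crdVersion is a simplified stand-in for one entry of spec.versions in a CRD.
type crdVersion struct {
	Name    string
	Storage bool
}

// storageVersion returns the name of the single storage version. It returns
// an error if no version or more than one version is flagged.
func storageVersion(versions []crdVersion) (string, error) {
	name, count := "", 0
	for _, v := range versions {
		if v.Storage {
			name = v.Name
			count++
		}
	}
	if count != 1 {
		return "", fmt.Errorf("expected exactly one storage version, found %d", count)
	}
	return name, nil
}

func main() {
	versions := []crdVersion{
		{Name: "v1alpha1"},
		{Name: "v2alpha2"},               // marker removed here
		{Name: "v1beta1", Storage: true}, // marker added here
	}
	name, err := storageVersion(versions)
	fmt.Println(name, err)
}
```

If we forgot to remove the marker from v2alpha2, the helper would report two storage versions.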

Step 4: Update the example custom resource object creation yaml

- Update config.samples.multitenancy_v1beta1_tenancyfrontend.yaml with the following code

apiVersion: multitenancy.example.net/v1beta1
kind: TenancyFrontend
metadata:
  name: tenancyfrontend-sample
spec:
  # TODO(user): Add fields here
  size: 1
  displayname: "Movie-Store"
  catalogname: Movies

Step 5: To apply the changes we run make generate and make manifests

make generate

make manifests

That adds the newly created API to the CustomResourceDefinition file config.crd.bases.multitenancy.example.net_tenancyfrontends.yaml.

Step 6: Update the controller implementation

In the older blog post we still examined the older API v1alpha1. Now we comment that out in the controller implementation, which also removes the import for the v1alpha1 API version.

// "Verify if a CRD of TenancyFrontend exists"
/*
	logger.Info("Verify if a CRD of TenancyFrontend exists")

	tenancyfrontend_old := &multitenancyv1alpha1.TenancyFrontend{}
	err := r.Get(ctx, req.NamespacedName, tenancyfrontend_old)

	if err != nil {
		if errors.IsNotFound(err) {
			logger.Info("TenancyFrontend v1alpha1 resource not found.")
		}
		// Error reading the object - requeue the request.
		logger.Info("Failed to get TenancyFrontend v1alpha1")
	} else {
		logger.Info("Got an old TenancyFrontend v1alpha1, object this will not be used!")
	}
*/

tenancyfrontend := &multitenancyv2alpha2.TenancyFrontend{}
err := r.Get(ctx, req.NamespacedName, tenancyfrontend)

Step 7: Update the controller implementation to use the new v1beta1 API and replace v2alpha2

3. Add a webhook and a conversion to the operator

Therefore we use the parameters for a webhook and a conversion; for details you can visit the Operator SDK documentation.

Step 1: Invoke the operator-sdk

These are the parameters used:

- --conversion: if set, scaffold the conversion webhook

- --defaulting: if set, scaffold the defaulting webhook

- --programmatic-validation: if set, scaffold the validating webhook

operator-sdk create webhook --group multitenancy --version v1beta1 --kind TenancyFrontend --conversion --defaulting --programmatic-validation

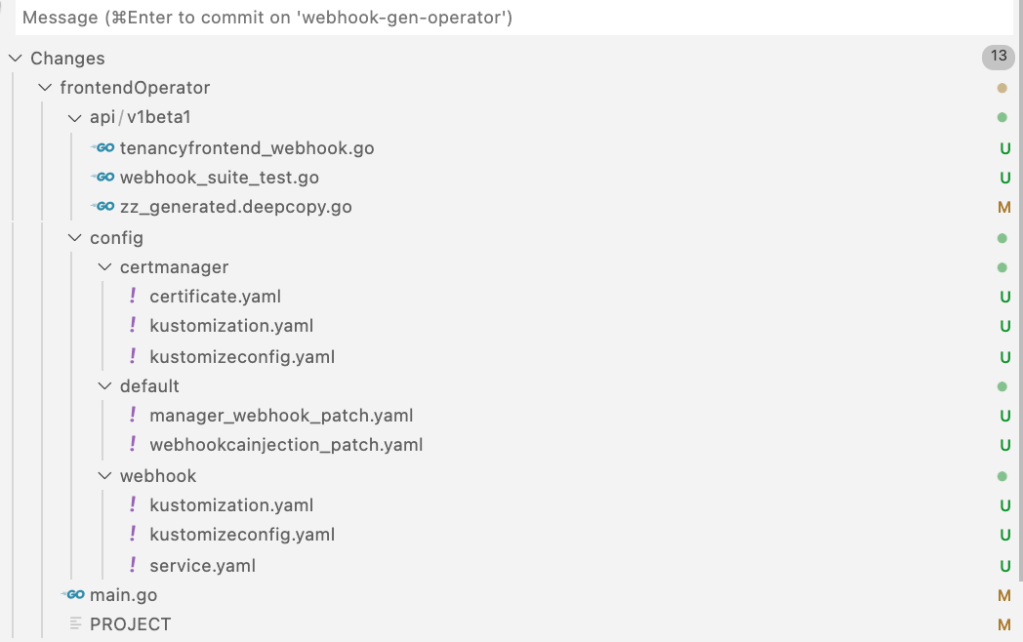

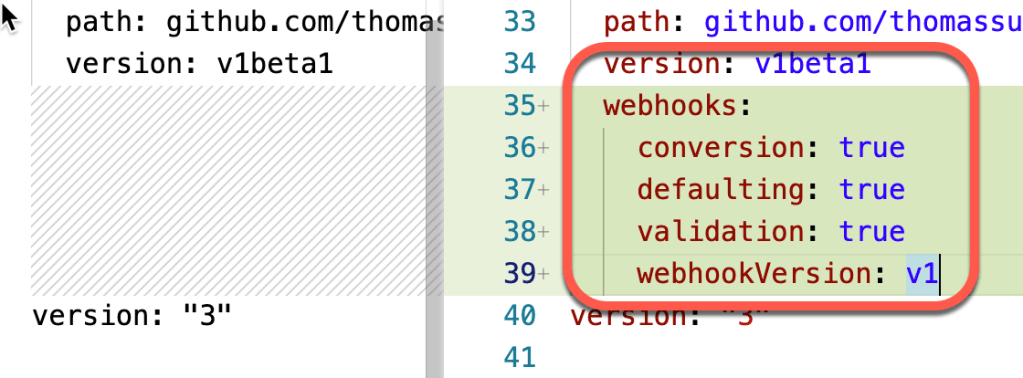

As we see in the git changes, 11 files are affected by this creation:

Step 2: Let’s have a closer look at the changes

The following contains almost all information from the different files in detail. The command adds webhook and cert-manager related configurations to the operator project.

IMPORTANT: To run the operator locally, we need to take a look at the Operator SDK documentation, which also points to the kubebuilder book regarding the needed TLS certificate. That is the reason why we will not run the operator with the webhook locally.

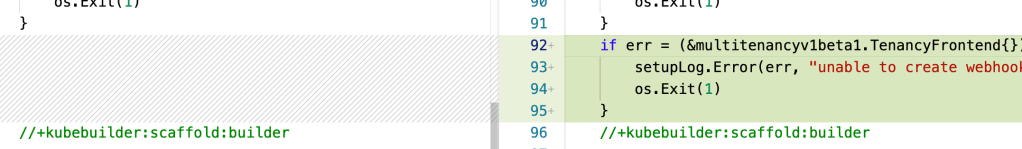

- Modified frontendOperator.main.go

- Modified frontendOperator.PROJECT

The webhook for the conversion in version 1 was added.

package v1beta1

import (
	"k8s.io/apimachinery/pkg/runtime"
	ctrl "sigs.k8s.io/controller-runtime"
	logf "sigs.k8s.io/controller-runtime/pkg/log"
	"sigs.k8s.io/controller-runtime/pkg/webhook"
)

// log is for logging in this package.
var tenancyfrontendlog = logf.Log.WithName("tenancyfrontend-resource")

func (r *TenancyFrontend) SetupWebhookWithManager(mgr ctrl.Manager) error {
	return ctrl.NewWebhookManagedBy(mgr).
		For(r).
		Complete()
}

// TODO(user): EDIT THIS FILE! THIS IS SCAFFOLDING FOR YOU TO OWN!

//+kubebuilder:webhook:path=/mutate-multitenancy-example-net-v1beta1-tenancyfrontend,mutating=true,failurePolicy=fail,sideEffects=None,groups=multitenancy.example.net,resources=tenancyfrontends,verbs=create;update,versions=v1beta1,name=mtenancyfrontend.kb.io,admissionReviewVersions=v1

var _ webhook.Defaulter = &TenancyFrontend{}

// Default implements webhook.Defaulter so a webhook will be registered for the type
func (r *TenancyFrontend) Default() {
	tenancyfrontendlog.Info("default", "name", r.Name)

	// TODO(user): fill in your defaulting logic.
}

// TODO(user): change verbs to "verbs=create;update;delete" if you want to enable deletion validation.
//+kubebuilder:webhook:path=/validate-multitenancy-example-net-v1beta1-tenancyfrontend,mutating=false,failurePolicy=fail,sideEffects=None,groups=multitenancy.example.net,resources=tenancyfrontends,verbs=create;update,versions=v1beta1,name=vtenancyfrontend.kb.io,admissionReviewVersions=v1

var _ webhook.Validator = &TenancyFrontend{}

// ValidateCreate implements webhook.Validator so a webhook will be registered for the type
func (r *TenancyFrontend) ValidateCreate() error {
	tenancyfrontendlog.Info("validate create", "name", r.Name)

	// TODO(user): fill in your validation logic upon object creation.
	return nil
}

// ValidateUpdate implements webhook.Validator so a webhook will be registered for the type
func (r *TenancyFrontend) ValidateUpdate(old runtime.Object) error {
	tenancyfrontendlog.Info("validate update", "name", r.Name)

	// TODO(user): fill in your validation logic upon object update.
	return nil
}

// ValidateDelete implements webhook.Validator so a webhook will be registered for the type
func (r *TenancyFrontend) ValidateDelete() error {
	tenancyfrontendlog.Info("validate delete", "name", r.Name)

	// TODO(user): fill in your validation logic upon object deletion.
	return nil
}

package v1beta1

import (
	"context"
	"crypto/tls"
	"fmt"
	"net"
	"path/filepath"
	"testing"
	"time"

	. "github.com/onsi/ginkgo"
	. "github.com/onsi/gomega"

	admissionv1beta1 "k8s.io/api/admission/v1beta1"
	//+kubebuilder:scaffold:imports
	"k8s.io/apimachinery/pkg/runtime"
	"k8s.io/client-go/rest"
	ctrl "sigs.k8s.io/controller-runtime"
	"sigs.k8s.io/controller-runtime/pkg/client"
	"sigs.k8s.io/controller-runtime/pkg/envtest"
	"sigs.k8s.io/controller-runtime/pkg/envtest/printer"
	logf "sigs.k8s.io/controller-runtime/pkg/log"
	"sigs.k8s.io/controller-runtime/pkg/log/zap"
)

// These tests use Ginkgo (BDD-style Go testing framework). Refer to
// http://onsi.github.io/ginkgo/ to learn more about Ginkgo.

var cfg *rest.Config
var k8sClient client.Client
var testEnv *envtest.Environment
var ctx context.Context
var cancel context.CancelFunc

func TestAPIs(t *testing.T) {
	RegisterFailHandler(Fail)

	RunSpecsWithDefaultAndCustomReporters(t,
		"Webhook Suite",
		[]Reporter{printer.NewlineReporter{}})
}

var _ = BeforeSuite(func() {
	logf.SetLogger(zap.New(zap.WriteTo(GinkgoWriter), zap.UseDevMode(true)))

	ctx, cancel = context.WithCancel(context.TODO())

	By("bootstrapping test environment")
	testEnv = &envtest.Environment{
		CRDDirectoryPaths:     []string{filepath.Join("..", "..", "config", "crd", "bases")},
		ErrorIfCRDPathMissing: false,
		WebhookInstallOptions: envtest.WebhookInstallOptions{
			Paths: []string{filepath.Join("..", "..", "config", "webhook")},
		},
	}

	cfg, err := testEnv.Start()
	Expect(err).NotTo(HaveOccurred())
	Expect(cfg).NotTo(BeNil())

	scheme := runtime.NewScheme()
	err = AddToScheme(scheme)
	Expect(err).NotTo(HaveOccurred())

	err = admissionv1beta1.AddToScheme(scheme)
	Expect(err).NotTo(HaveOccurred())

	//+kubebuilder:scaffold:scheme

	k8sClient, err = client.New(cfg, client.Options{Scheme: scheme})
	Expect(err).NotTo(HaveOccurred())
	Expect(k8sClient).NotTo(BeNil())

	// start webhook server using Manager
	webhookInstallOptions := &testEnv.WebhookInstallOptions
	mgr, err := ctrl.NewManager(cfg, ctrl.Options{
		Scheme:             scheme,
		Host:               webhookInstallOptions.LocalServingHost,
		Port:               webhookInstallOptions.LocalServingPort,
		CertDir:            webhookInstallOptions.LocalServingCertDir,
		LeaderElection:     false,
		MetricsBindAddress: "0",
	})
	Expect(err).NotTo(HaveOccurred())

	err = (&TenancyFrontend{}).SetupWebhookWithManager(mgr)
	Expect(err).NotTo(HaveOccurred())

	//+kubebuilder:scaffold:webhook

	go func() {
		defer GinkgoRecover()
		err = mgr.Start(ctx)
		Expect(err).NotTo(HaveOccurred())
	}()

	// wait for the webhook server to get ready
	dialer := &net.Dialer{Timeout: time.Second}
	addrPort := fmt.Sprintf("%s:%d", webhookInstallOptions.LocalServingHost, webhookInstallOptions.LocalServingPort)
	Eventually(func() error {
		conn, err := tls.DialWithDialer(dialer, "tcp", addrPort, &tls.Config{InsecureSkipVerify: true})
		if err != nil {
			return err
		}
		conn.Close()
		return nil
	}).Should(Succeed())

}, 60)

var _ = AfterSuite(func() {
	cancel()
	By("tearing down the test environment")
	err := testEnv.Stop()
	Expect(err).NotTo(HaveOccurred())
})

The following manifests contain a self-signed issuer CR and a certificate CR.

apiVersion: cert-manager.io/v1
kind: Issuer
metadata:
  name: selfsigned-issuer
  namespace: system
spec:
  selfSigned: {}
---
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: serving-cert # this name should match the one appeared in kustomizeconfig.yaml
  namespace: system
spec:
  # $(SERVICE_NAME) and $(SERVICE_NAMESPACE) will be substituted by kustomize
  dnsNames:
  - $(SERVICE_NAME).$(SERVICE_NAMESPACE).svc
  - $(SERVICE_NAME).$(SERVICE_NAMESPACE).svc.cluster.local
  issuerRef:
    kind: Issuer
    name: selfsigned-issuer
  secretName: webhook-server-cert # this secret will not be prefixed, since it's not managed by kustomize
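The two dnsNames entries of the certificate become the in-cluster DNS names of the webhook service once kustomize has replaced the variables. Here is a small sketch of that substitution. The helper webhookDNSNames is hypothetical, and the values passed in main are assumed examples; the real service name depends on the name prefix kustomize adds.

```go
package main

import "fmt"

// webhookDNSNames returns the two DNS names listed in the certificate after
// $(SERVICE_NAME) and $(SERVICE_NAMESPACE) are replaced with concrete values.
func webhookDNSNames(serviceName, serviceNamespace string) []string {
	return []string{
		fmt.Sprintf("%s.%s.svc", serviceName, serviceNamespace),
		fmt.Sprintf("%s.%s.svc.cluster.local", serviceName, serviceNamespace),
	}
}

func main() {
	// Both values are assumed examples, not taken from a real deployment.
	for _, name := range webhookDNSNames("webhook-service", "operators") {
		fmt.Println(name)
	}
}
```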

resources:
- certificate.yaml

configurations:
- kustomizeconfig.yaml

This configuration teaches kustomize how to update name references and how to do var substitution.

nameReference:
- kind: Issuer
  group: cert-manager.io
  fieldSpecs:
  - kind: Certificate
    group: cert-manager.io
    path: spec/issuerRef/name

varReference:
- kind: Certificate
  group: cert-manager.io
  path: spec/commonName
- kind: Certificate
  group: cert-manager.io
  path: spec/dnsNames

apiVersion: apps/v1
kind: Deployment
metadata:
  name: controller-manager
  namespace: system
spec:
  template:
    spec:
      containers:
      - name: manager
        ports:
        - containerPort: 9443
          name: webhook-server
          protocol: TCP
        volumeMounts:
        - mountPath: /tmp/k8s-webhook-server/serving-certs
          name: cert
          readOnly: true
      volumes:
      - name: cert
        secret:
          defaultMode: 420
          secretName: webhook-server-cert

This patch adds an annotation to the admission webhook config; the variables $(CERTIFICATE_NAMESPACE) and $(CERTIFICATE_NAME) will be substituted by kustomize.

apiVersion: admissionregistration.k8s.io/v1
kind: MutatingWebhookConfiguration
metadata:
  name: mutating-webhook-configuration
  annotations:
    cert-manager.io/inject-ca-from: $(CERTIFICATE_NAMESPACE)/$(CERTIFICATE_NAME)
---
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingWebhookConfiguration
metadata:
  name: validating-webhook-configuration
  annotations:
    cert-manager.io/inject-ca-from: $(CERTIFICATE_NAMESPACE)/$(CERTIFICATE_NAME)

resources:
- manifests.yaml
- service.yaml

configurations:
- kustomizeconfig.yaml

The following config teaches kustomize where to look when substituting vars.

nameReference:
- kind: Service
  version: v1
  fieldSpecs:
  - kind: MutatingWebhookConfiguration
    group: admissionregistration.k8s.io
    path: webhooks/clientConfig/service/name
  - kind: ValidatingWebhookConfiguration
    group: admissionregistration.k8s.io
    path: webhooks/clientConfig/service/name

namespace:
- kind: MutatingWebhookConfiguration
  group: admissionregistration.k8s.io
  path: webhooks/clientConfig/service/namespace
  create: true
- kind: ValidatingWebhookConfiguration
  group: admissionregistration.k8s.io
  path: webhooks/clientConfig/service/namespace
  create: true

varReference:
- path: metadata/annotations

apiVersion: v1
kind: Service
metadata:
  name: webhook-service
  namespace: system
spec:
  ports:
  - port: 443
    protocol: TCP
    targetPort: 9443
  selector:
    control-plane: controller-manager

4. Implement the conversion for the existing APIs

Until now we don't do anything if we find a v1alpha1 custom resource object in our current operator implementation; now we're going to change that.

To implement the API conversion, we can find information on the Internet. This information is related to the hubs and spokes concept found in the kubebuilder documentation. The kubernetes-sigs organization provides a conversion package that we will use to implement the conversion.

Step 1: Convert the custom resource objects

First we concentrate on the conversion of the APIs in the Custom Resource Definition implementation. Therefore, we need to modify the files where the Custom Resource Definitions are specified in our source code.

These are the two files (hub and spoke):

- v1alpha1 "spoke"

Here we will implement the conversions in the frontendOperator.api.v1alpha1.tenancyfrontend.go file: one from v1alpha1 to v1beta1 in the ConvertTo function, and one from v1beta1 to v1alpha1 in the ConvertFrom function. I would call it upgrade and downgrade of the API version.

- v1beta1 "hub"

Here we will set the "hub" reference in the frontendOperator.api.v1beta1.tenancyfrontend.go file.

Let's implement the conversion.

v1beta1 (hub)

func (*TenancyFrontend) Hub() {}

v1alpha1 (spoke)

Now we work in the frontendOperator.api.v1alpha1.tenancyfrontend.go file.

- Update the import

import (
	"github.com/thomassuedbroecker/multi-tenancy-frontend-operator/api/v1beta1"
	metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"
	"sigs.k8s.io/controller-runtime/pkg/conversion"
)

- Implement the conversion

In the following code you see the conversion. We are not handling the downgrade properly at the moment: we lose the catalogname value when we downgrade to the older version, because currently we don't save this information anywhere. To avoid such a situation, take a look at the blog post from Niklas Heidloff called Converting Custom Resource Versions in Operators. He covers how to use the annotation kubectl.kubernetes.io/last-applied-configuration.

kubectl.kubernetes.io/last-applied-configuration: |
  {"apiVersion":"multitenancy.example.net/v1alpha1","kind":"TenancyFrontend","metadata":{"annotations":{},"name":"tenancyfrontendsample","namespace":"default"},"spec":{"displayname":"MyFrontendDisplayname","size":1}}

// ConvertTo converts this v1alpha1 to v1beta1. (upgrade)
func (src *TenancyFrontend) ConvertTo(dstRaw conversion.Hub) error {
	dst := dstRaw.(*v1beta1.TenancyFrontend)
	dst.ObjectMeta = src.ObjectMeta

	// defined in "v1beta1"
	// -------------------------------
	// kubebuilder:validation:Required
	// kubebuilder:validation:MaxLength=15
	maxLength := 15
	if len(src.Spec.DisplayName) > maxLength {
		dst.Spec.DisplayName = src.Spec.DisplayName[:maxLength]
	} else {
		dst.Spec.DisplayName = src.Spec.DisplayName
	}

	// defined in "v1beta1"
	// -------------------------------
	// kubebuilder:validation:Required
	// kubebuilder:validation:Minimum=0
	if src.Spec.Size < 0 {
		dst.Spec.Size = 0
	} else {
		dst.Spec.Size = src.Spec.Size
	}

	// defined in "v1beta1"
	// -------------------------------
	// kubebuilder:validation:MaxLength=15
	// kubebuilder:default:=Movies
	dst.Spec.CatalogName = "Movies"

	return nil
}

// ConvertFrom converts from the Hub version (v1beta1) to (v1alpha1). (downgrade)
func (dst *TenancyFrontend) ConvertFrom(srcRaw conversion.Hub) error {
	src := srcRaw.(*v1beta1.TenancyFrontend)
	dst.ObjectMeta = src.ObjectMeta
	dst.Spec.Size = src.Spec.Size
	dst.Spec.DisplayName = src.Spec.DisplayName

	return nil
}
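One way to avoid losing the catalogname on a downgrade is to park the value in an annotation during the downgrade and to restore it during the upgrade. The sketch below shows this idea with simplified stand-in types instead of the real API types, so that it runs without the operator project. The annotation key multitenancy.example.net/catalogname, the type names and the functions downgrade and upgrade are assumptions for illustration; this is not what the example repository implements.

```go
package main

import "fmt"

// catalogAnnotation is a hypothetical annotation key used to park the value.
const catalogAnnotation = "multitenancy.example.net/catalogname"

// hubObject stands in for the v1beta1 TenancyFrontend (hub).
type hubObject struct {
	Annotations map[string]string
	Size        int32
	DisplayName string
	CatalogName string
}

// spokeObject stands in for the v1alpha1 TenancyFrontend (spoke),
// which has no catalogname field.
type spokeObject struct {
	Annotations map[string]string
	Size        int32
	DisplayName string
}

// copyAnnotations returns a copy so source and destination do not share a map.
func copyAnnotations(in map[string]string) map[string]string {
	out := make(map[string]string, len(in)+1)
	for k, v := range in {
		out[k] = v
	}
	return out
}

// downgrade converts hub -> spoke and keeps catalogname in an annotation.
func downgrade(src *hubObject) *spokeObject {
	dst := &spokeObject{
		Annotations: copyAnnotations(src.Annotations),
		Size:        src.Size,
		DisplayName: src.DisplayName,
	}
	dst.Annotations[catalogAnnotation] = src.CatalogName
	return dst
}

// upgrade converts spoke -> hub. It restores a parked catalogname and
// falls back to the default "Movies" when no value was parked.
func upgrade(src *spokeObject) *hubObject {
	dst := &hubObject{
		Annotations: copyAnnotations(src.Annotations),
		Size:        src.Size,
		DisplayName: src.DisplayName,
		CatalogName: "Movies",
	}
	if v, ok := dst.Annotations[catalogAnnotation]; ok {
		dst.CatalogName = v
		delete(dst.Annotations, catalogAnnotation)
	}
	return dst
}

func main() {
	hub := &hubObject{Size: 1, DisplayName: "Movie-Store", CatalogName: "Books"}
	restored := upgrade(downgrade(hub))
	fmt.Println(restored.CatalogName)
}
```

With this round trip the value Books survives the downgrade, while an object that was created as v1alpha1 still gets the default Movies.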

Step 2: Use make commands generate and manifests

Let us now use the make commands generate and manifests and see the changes in our code.

make generate

make manifests

We will notice that a config.webhook.manifests.yaml file was created.

---
apiVersion: admissionregistration.k8s.io/v1
kind: MutatingWebhookConfiguration
metadata:
  creationTimestamp: null
  name: mutating-webhook-configuration
webhooks:
- admissionReviewVersions:
  - v1
  clientConfig:
    service:
      name: webhook-service
      namespace: system
      path: /mutate-multitenancy-example-net-v1beta1-tenancyfrontend
  failurePolicy: Fail
  name: mtenancyfrontend.kb.io
  rules:
  - apiGroups:
    - multitenancy.example.net
    apiVersions:
    - v1beta1
    operations:
    - CREATE
    - UPDATE
    resources:
    - tenancyfrontends
  sideEffects: None
---
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingWebhookConfiguration
metadata:
  creationTimestamp: null
  name: validating-webhook-configuration
webhooks:
- admissionReviewVersions:
  - v1
  clientConfig:
    service:
      name: webhook-service
      namespace: system
      path: /validate-multitenancy-example-net-v1beta1-tenancyfrontend
  failurePolicy: Fail
  name: vtenancyfrontend.kb.io
  rules:
  - apiGroups:
    - multitenancy.example.net
    apiVersions:
    - v1beta1
    operations:
    - CREATE
    - UPDATE
    resources:
    - tenancyfrontends
  sideEffects: None

5. Configure some files to use the webhook and a conversion

I got the following information from the blog post Configuring Webhooks for Kubernetes Operators by Niklas Heidloff.

Step 1: config.crd.kustomization.yaml file

- [WEBHOOK] To enable webhook, uncomment all the sections with [WEBHOOK] prefix. Patches here are for enabling the conversion webhook for each CRD

- patches/webhook_in_tenancyfrontends.yaml

- [CERTMANAGER] To enable cert-manager, uncomment all the sections with [CERTMANAGER] prefix. Patches here are for enabling the CA injection for each CRD

- patches/cainjection_in_tenancyfrontends.yaml

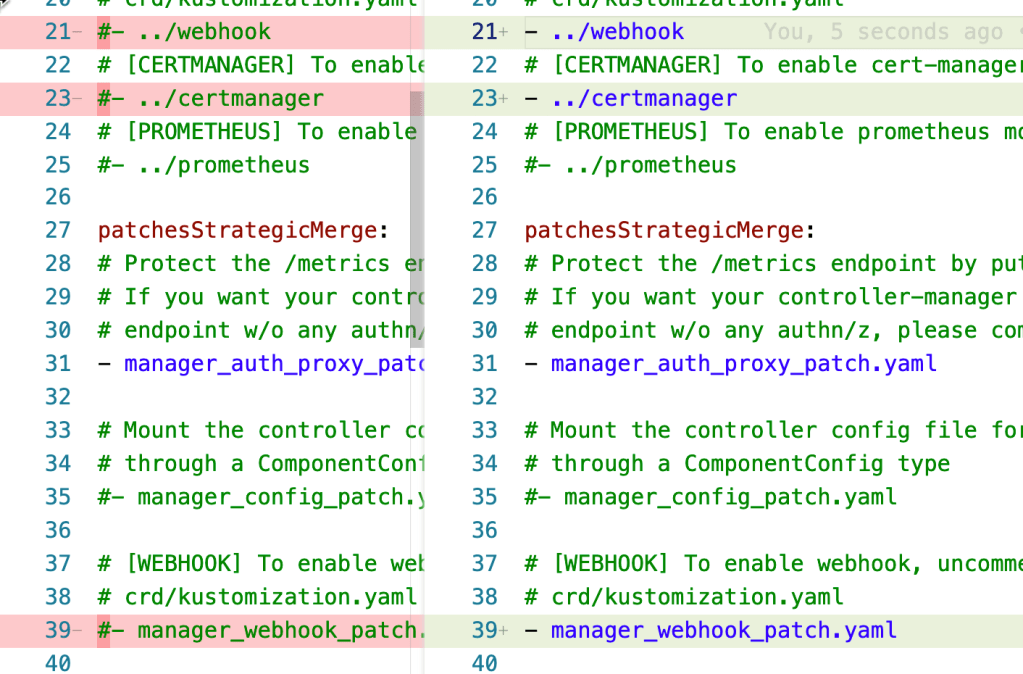

Step 2: config.default.kustomization.yaml file

We are going to enable the webhook and the cert-manager. In that file we find the instructions to do this.

These are the changes, including the extracted comments:

- [WEBHOOK] To enable webhook, uncomment all the sections with [WEBHOOK] prefix including the one in crd/kustomization.yaml

- ../webhook

- [CERTMANAGER] To enable cert-manager, uncomment all sections with ‘CERTMANAGER’. ‘WEBHOOK’ components are required.

- ../certmanager

- [WEBHOOK] To enable webhook, uncomment all the sections with [WEBHOOK] prefix including the one in crd/kustomization.yaml

- manager_webhook_patch.yaml

- [CERTMANAGER] To enable cert-manager, uncomment all sections with ‘CERTMANAGER’ prefix.

- name: CERTIFICATE_NAMESPACE # namespace of the certificate CR
  objref:
    kind: Certificate
    group: cert-manager.io
    version: v1
    name: serving-cert # this name should match the one in certificate.yaml
  fieldref:
    fieldpath: metadata.namespace
- name: CERTIFICATE_NAME
  objref:
    kind: Certificate
    group: cert-manager.io
    version: v1
    name: serving-cert # this name should match the one in certificate.yaml
- name: SERVICE_NAMESPACE # namespace of the service
  objref:
    kind: Service
    version: v1
    name: webhook-service
  fieldref:
    fieldpath: metadata.namespace
- name: SERVICE_NAME
  objref:
    kind: Service
    version: v1
    name: webhook-service

Step 3: Install a cert-manager

To ensure that the webhook works with the certificates we need to install a cert-manager on our Kubernetes cluster.

kubectl apply --validate=false -f https://github.com/jetstack/cert-manager/releases/download/v1.7.2/cert-manager.yaml

Note: cert-manager releases

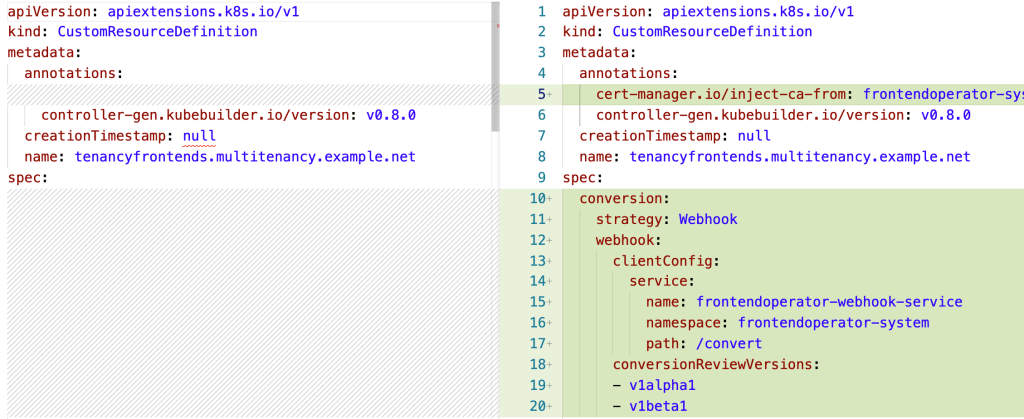

Step 4: Add the relevant versions for the conversion webhook

Now we add the relevant versions, v1alpha1 and v1beta1, for the conversion webhook. The following patch enables a conversion webhook for the CRD (frontendOperator.config.crd.patches.webhook_in_tenancyfrontends.yaml).

apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: tenancyfrontends.multitenancy.example.net
spec:
  conversion:
    strategy: Webhook
    webhook:
      clientConfig:
        service:
          namespace: system
          name: webhook-service
          path: /convert
      conversionReviewVersions:
      - v1alpha1
      - v1beta1

Step 5: Ensure the right manifests will be used later

Comment out - manifests.yaml to ensure the right manifests will be used later (frontendOperator.config.webhook.kustomization.yaml).

resources:
# - manifests.yaml
- service.yaml

configurations:
- kustomizeconfig.yaml

5. Create and push controller-manager image to a container registry

Now we will build the image using the makefile. We use Quay.io as our container registry for the container image.

Step 1: Login to Quay.io

docker login quay.io

Step 2: Use a custom container name

export REGISTRY='quay.io'

export ORG='tsuedbroecker'

export CONTROLLER_IMAGE='frontendcontroller:v5'

Step 3: Build the container using the make file

make generate

make manifests

make docker-build IMG="$REGISTRY/$ORG/$CONTROLLER_IMAGE"

Step 4: Push the container to the container registry

docker push "$REGISTRY/$ORG/$CONTROLLER_IMAGE"

6. Create a bundle image

Step 1: Create a bundle

With the IMG parameter we define the location of the existing controller-manager image for the operator, and with VERSION=0.0.3 we give the makefile the version for the next bundle we create.

Execute the commands:

export VERSION=0.0.3

make bundle IMG="$REGISTRY/$ORG/$CONTROLLER_IMAGE"

Now the bundle contains the information for the webhook, as you see in the image below.

Step 2: Create a bundle image

Set the custom container name (the default of the Multi Tenancy Frontend Operator doesn't fit the default usage of the makefile).

export BUNDLE_IMAGE='bundlefrontendoperator:v4'

make bundle-build BUNDLE_IMG="$REGISTRY/$ORG/$BUNDLE_IMAGE"

Optional: We can run the operator as a deployment:

operator-sdk run bundle "$REGISTRY/$ORG/$BUNDLE_IMAGE" -n operators

Step 3: Push the bundle image and validate the bundle

docker push "$REGISTRY/$ORG/$BUNDLE_IMAGE"

operator-sdk bundle validate "$REGISTRY/$ORG/$BUNDLE_IMAGE"

7. Create and push a new catalog image to a container registry

Step 1: Create a catalog image

export CATALOG_IMAGE=frontend-catalog

export CATALOG_TAG=v0.0.3

make catalog-build CATALOG_IMG="$REGISTRY/$ORG/$CATALOG_IMAGE:$CATALOG_TAG" BUNDLE_IMGS="$REGISTRY/$ORG/$BUNDLE_IMAGE"

Step 2: Push the catalog image to a container registry

docker push "$REGISTRY/$ORG/$CATALOG_IMAGE:$CATALOG_TAG"

8. Update the Catalog source and the Subscription specification

Ensure that the Operator Lifecycle Manager (OLM) and cert-manager are installed on your Kubernetes cluster.

kubectl apply --validate=false -f https://github.com/jetstack/cert-manager/releases/download/v1.7.2/cert-manager.yaml

operator-sdk olm install latest

Step 1: Define the catalog source specification

Update the file called olm-configuration/catalogsource.yaml and paste the content of the yaml below into that file. As we see, the CatalogSource references the quay.io/tsuedbroecker/frontend-catalog:v0.0.3 image we created before.

apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  name: frontend-operator-catalog
  namespace: operators
spec:
  displayName: Frontend Operator Catalog
  publisher: Thomas Suedbroecker
  sourceType: grpc
  image: quay.io/tsuedbroecker/frontend-catalog:v0.0.3
  updateStrategy:
    registryPoll:
      interval: 10m

- Apply that CatalogSource to the cluster.

kubectl apply -f olm-configuration/catalogsource.yaml -n operators

- Verify the CatalogSource.

kubectl get catalogsource -n operators

- Example output:

NAME                        DISPLAY             TYPE   PUBLISHER             AGE
frontend-operator-catalog   Frontend Operator   grpc   Thomas Suedbroecker   46m

Step 2: Define the subscription source

Here we need to add installPlanApproval: Manual, compared to the initial version we had in the last blog post (olm-configuration/subscription.yaml).

apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: frontendoperator-v0-0-3-sub
  namespace: operators
spec:
  channel: beta
  name: frontendoperator
  source: frontend-operator-catalog
  sourceNamespace: operators
  installPlanApproval: Manual

- Apply the Subscription to the cluster.

kubectl apply -f olm-configuration/subscription.yaml -n operators

- Verify the Subscription.

kubectl get subscription -n operators

- Example output:

NAME                          PACKAGE            SOURCE                      CHANNEL
frontendoperator-v0-0-3-sub   frontendoperator   frontend-operator-catalog   alpha

Step 3: Install the operator

- Get the install plan

PLAN=$(kubectl get installplan -n operators | grep 'install' | awk '{print $1;}')

echo "$PLAN"

- Approve the installation

kubectl -n operators patch installplan $PLAN -p '{"spec":{"approved":true}}' --type merge

Example output:

installplan.operators.coreos.com/install-zzwdn patched

- Verify the installation

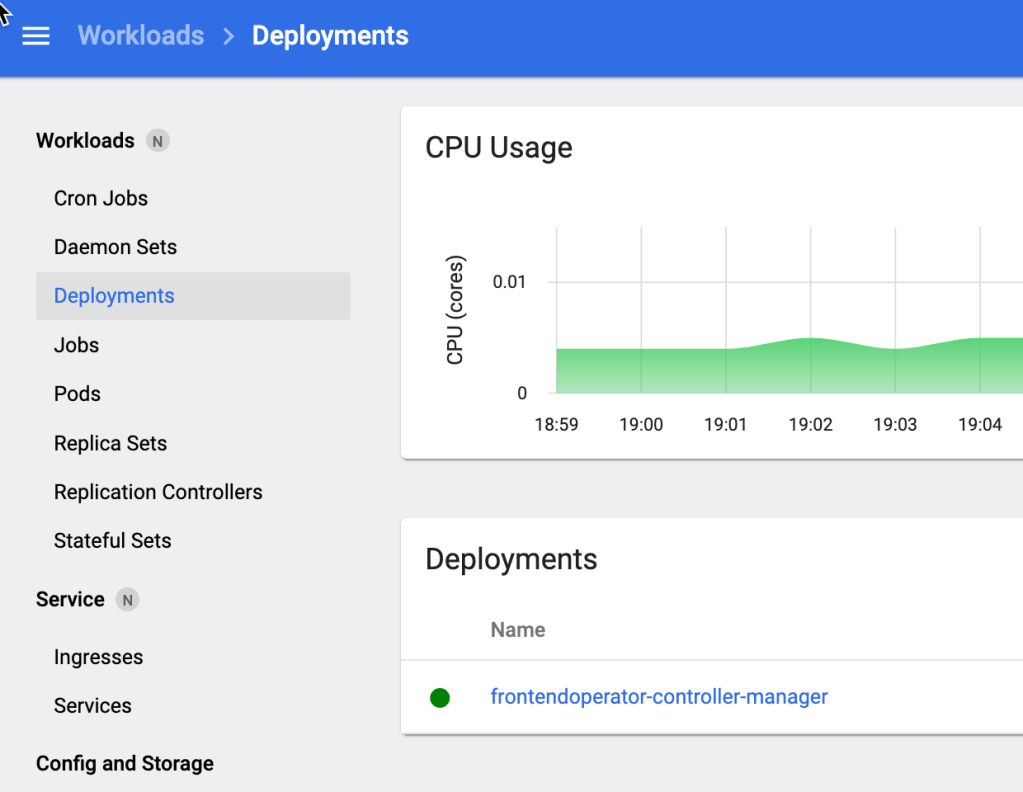

kubectl get deployments -n operators | grep "frontend"

Example output:

frontendoperator-controller-manager 1/1 1 1 28m

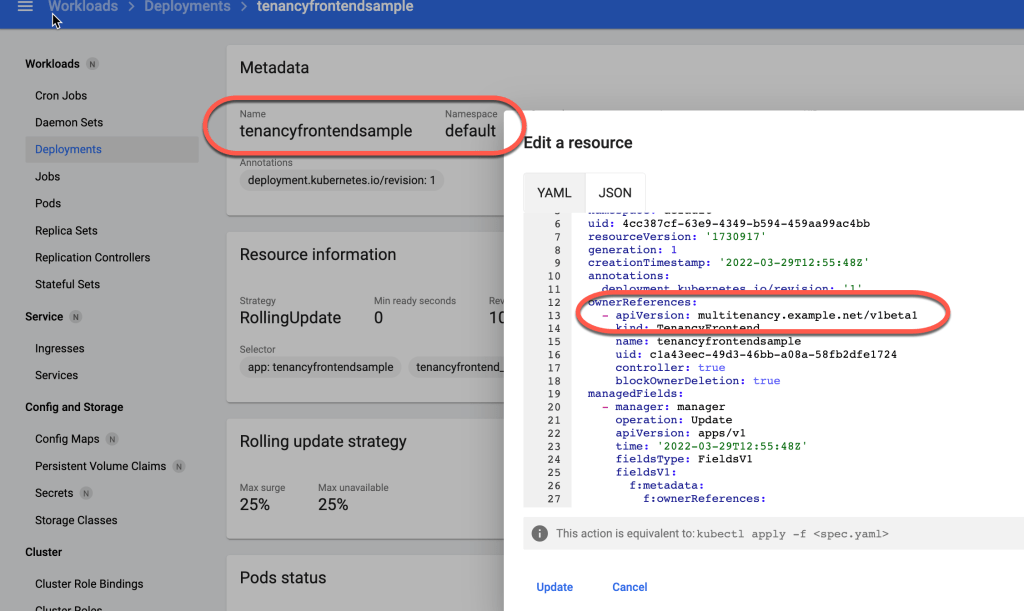

The image below shows it in the Kubernetes dashboard.

9. Create and verify an instance of the web application based on the new operator API version

Step 1: Create

kubectl apply -f config/samples/multitenancy_v1alpha1_tenancyfrontend.yaml -n default

REMEMBER: That's the old format for version v1alpha1

apiVersion: multitenancy.example.net/v1alpha1
kind: TenancyFrontend
metadata:
  name: tenancyfrontendsample
spec:
  # TODO(user): Add fields here
  size: 1
  displayname: MyFrontendDisplayname

- Example output:

Here you see that we used the API format v1alpha1 and that it was converted to v1beta1. You also see that catalogname contains the default value 'Movies'. The displayname was shortened to 15 characters (MyFrontendDispl instead of MyFrontendDisplayname), all as we have defined it for the v1beta1 API conversion.

catalogname: Movies

displayname: MyFrontendDispl

size: 1
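The shortening to 15 characters in ConvertTo slices the string by bytes, which is fine for the ASCII name used here. As a side note, a display name with multi-byte characters could be cut in the middle of a character; a rune-based variant avoids that. The helper truncateRunes below is hypothetical and not part of the example repository.

```go
package main

import "fmt"

// truncateRunes shortens s to at most max characters (runes). Unlike a byte
// slice expression it never cuts a multi-byte character in half.
func truncateRunes(s string, max int) string {
	runes := []rune(s)
	if len(runes) <= max {
		return s
	}
	return string(runes[:max])
}

func main() {
	fmt.Println(truncateRunes("MyFrontendDisplayname", 15)) // MyFrontendDispl
	fmt.Println(truncateRunes("Movie-Store", 15))           // Movie-Store
}
```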

kubectl get tenancyfrontend -oyaml

apiVersion: v1
items:
- apiVersion: multitenancy.example.net/v1beta1
  kind: TenancyFrontend
  metadata:
    annotations:
      kubectl.kubernetes.io/last-applied-configuration: |
        {"apiVersion":"multitenancy.example.net/v1alpha1","kind":"TenancyFrontend","metadata":{"annotations":{},"name":"tenancyfrontendsample","namespace":"default"},"spec":{"displayname":"MyFrontendDisplayname","size":1}}
    creationTimestamp: "2022-03-29T12:55:48Z"
    generation: 1
    name: tenancyfrontendsample
    namespace: default
    resourceVersion: "1730812"
    uid: c1a43eec-49d3-46bb-a08a-58fb2dfe1724
  spec:
    catalogname: Movies
    displayname: MyFrontendDispl
    size: 1
  status: {}
kind: List
metadata:
  resourceVersion: ""
  selfLink: ""

Here we see the annotation kubectl.kubernetes.io/last-applied-configuration in the output.

kubectl.kubernetes.io/last-applied-configuration: |
  {"apiVersion":"multitenancy.example.net/v1alpha1","kind":"TenancyFrontend","metadata":{"annotations":{},"name":"tenancyfrontendsample","namespace":"default"},"spec":{"displayname":"MyFrontendDisplayname","size":1}}

Remember the blog post from Niklas Heidloff called Converting Custom Resource Versions in Operators. He covers how to use this annotation.

The image below also shows that the version v1beta1 was created and saved, while v1alpha1 was accepted for the creation.

Step 2: Verify

kubectl get customresourcedefinition -n default | grep "frontend"

kubectl get tenancyfrontend -n default | grep "frontend"

kubectl get deployment -n default | grep "frontend"

kubectl get service -n default | grep "frontend"

kubectl get pod -n default | grep "frontend"

- Example output:

tenancyfrontends.multitenancy.example.net 2022-03-24

tenancyfrontend-sample 42s

tenancyfrontend-sample 1/1 1 1 42s

tenancyfrontend-sample NodePort 172.21.17.232 <none> 8080:30640/TCP 43s

tenancyfrontend-sampleclusterip ClusterIP 172.21.95.161 <none> 80/TCP 43s

tenancyfrontend-sample-5858f8d9f6-nzzgs 1/1 Running 0 44s

The GIF below shows the final status of the operator.

Summary

The conversion webhook implementation is not trivial, even with the Operator SDK; there is a lot you should take care of. It is useful to take a look at the project Kubernetes Operator Samples using Go, the Operator SDK and OLM. That project contains a lot of useful information related to Go operator development.

I hope this was useful to you and let’s see what’s next?

Greetings,

Thomas

#olm, #operatorsdk, #kubernetes, #bundle, #operator, #golong, #opm, #docker, #makefile, #operatorlearningjourney, #webhook, #conversion