I wrote this blog post while preparing my session for the AI Conference Vision & Impact, titled “AI changes the Software Development Lifecycle (SDLC) drastically: from coding to review.”

While preparing the talk, I started reflecting on a fundamental question: how do we review software that is increasingly generated by AI?

Table of contents

- Introduction

- The Complexity Did Not Disappear

- A New Question: How Do We Review AI-Generated Systems?

- Using AI to Review AI

- Trust, Risk and Responsibility

- Are Traditional Review Criteria Still Valid?

- Data Still Defines the Outcome

- Architecture and Regulations Are Moving Targets

- Where We Are Today

- Summary

- References

1. Introduction

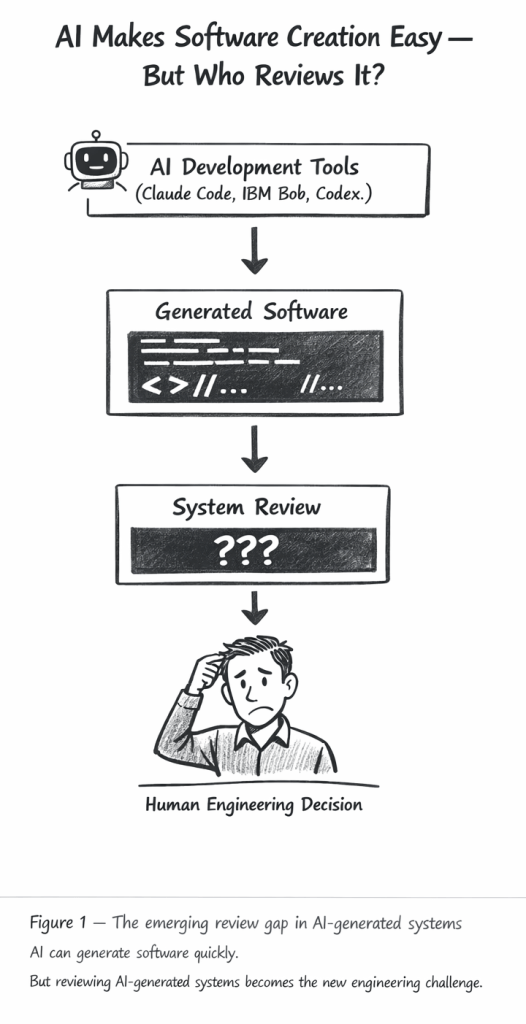

AI development tools such as IBM Bob, Claude Code, Codex, and Cursor make it extremely easy to generate software.

Work that previously required hours or days of manual effort can now be produced in minutes.

What started as vibe coding has opened the door to a new way of building software.

But generating code is no longer the main challenge.

The real challenge is turning generated code into structured, maintainable, and trustworthy systems.

This leads to a fundamental question:

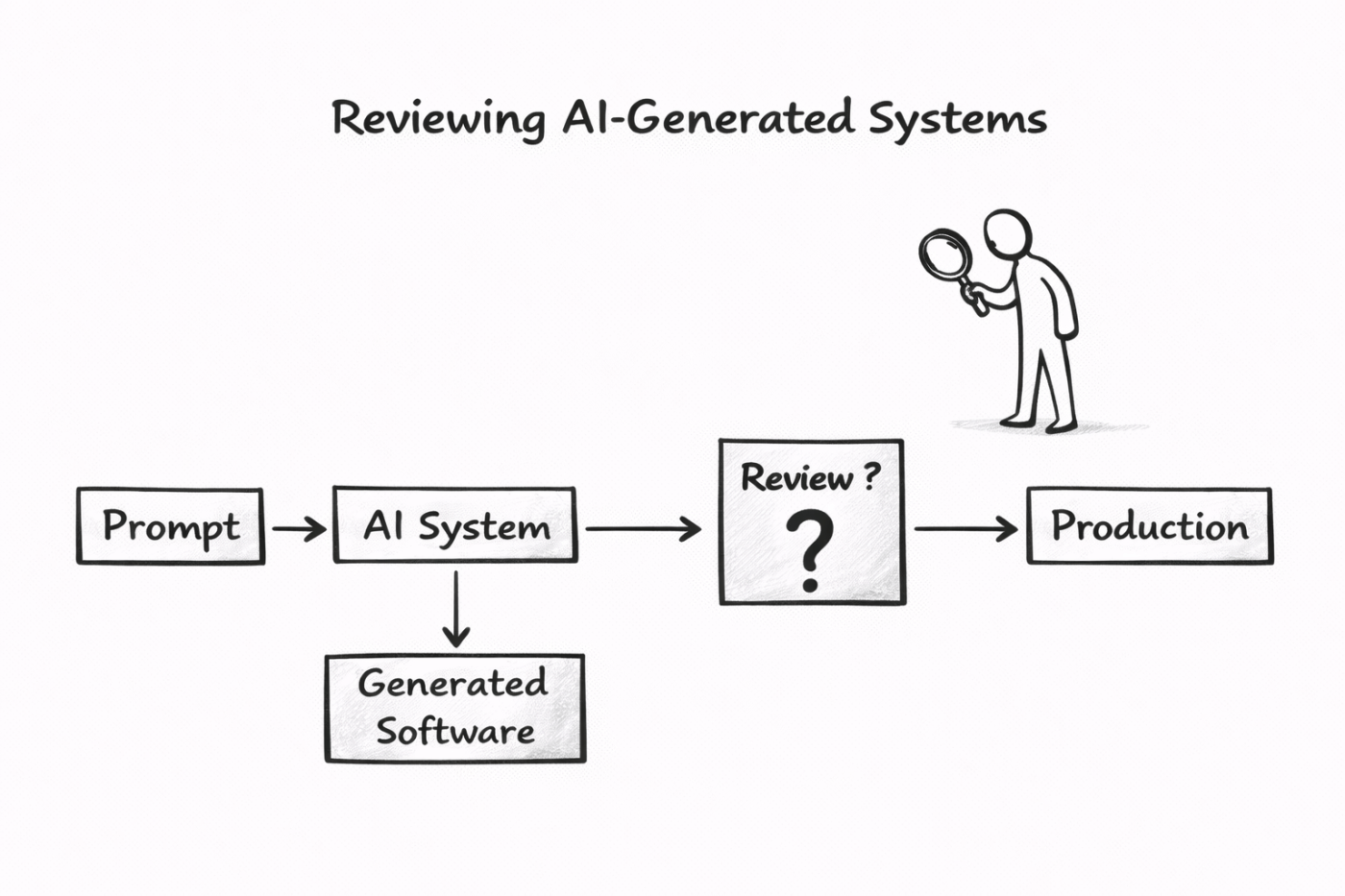

How do we review systems that are partially or fully generated by AI?

A paradox appears quickly:

If AI systems review AI-generated systems, we may implicitly approve software that no human fully understands.

To understand this challenge, we first need to look at something that AI has not removed: complexity.

2. The Complexity Did Not Disappear

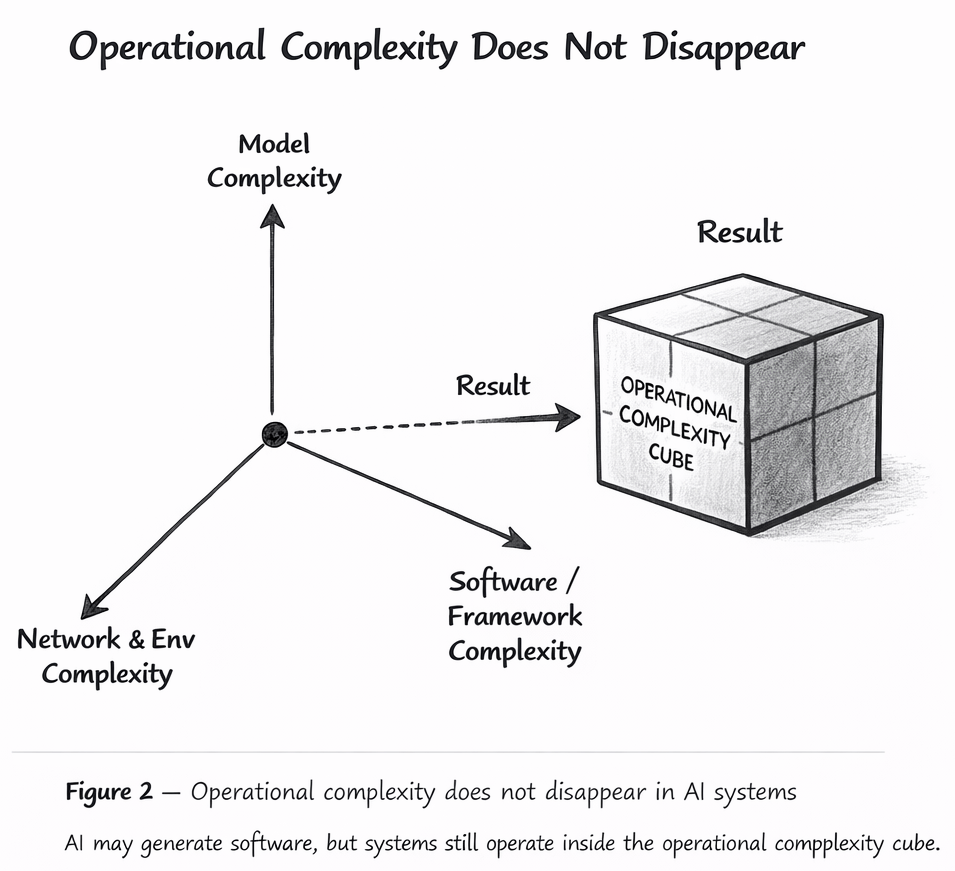

Even if the creation of software becomes easier, the operational environment in which systems must run remains complex.

Systems still depend on:

- infrastructure

- networks

- libraries and version dependencies

- APIs and service integrations

- deployment environments

In earlier work I described this challenge as the Operational Complexity Cube.

The idea behind this model is that modern systems are influenced by multiple dimensions of complexity at the same time. AI may change how software is created, but it does not remove these dimensions of complexity.

AI-generated systems still need to run inside the same operational environments.

In other words:

AI simplifies writing software, but it does not simplify running software.

AI also introduces a new dimension of its own: the AI models. Agents and other AI-driven software systems are built around these models and encapsulate them.

Reference

Operational Complexity Cube — Thomas Südbröcker

https://suedbroecker.net

3. A New Question: How Do We Review AI-Generated Systems?

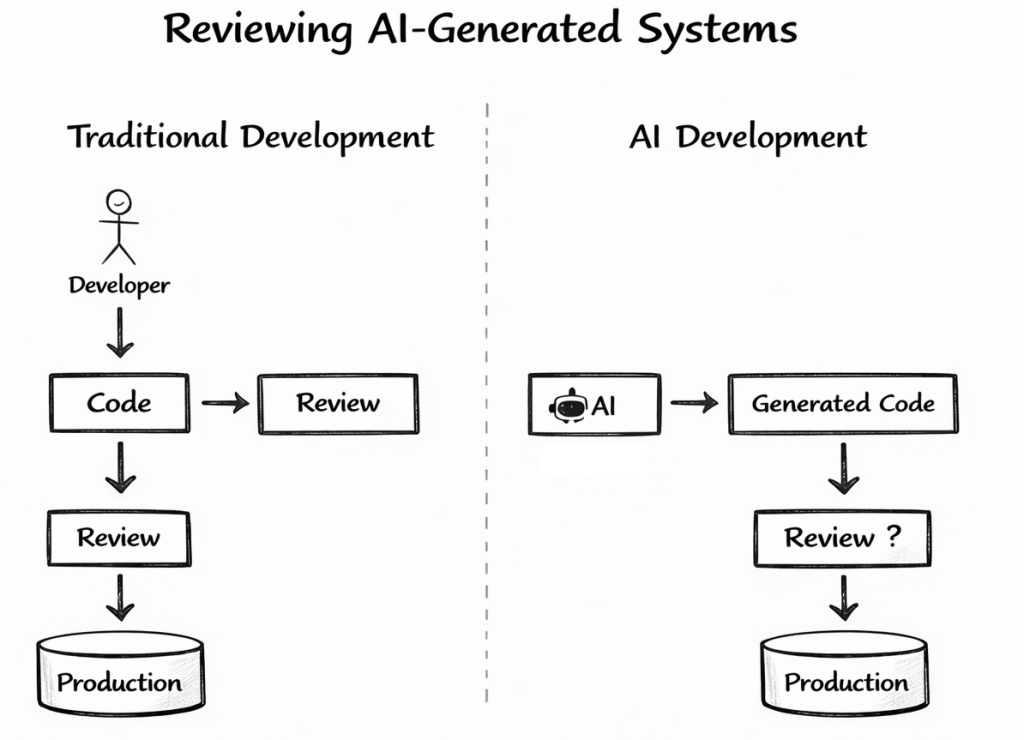

Traditional software engineering relies on several review mechanisms:

- code reviews

- architectural reviews

- testing pipelines

- operational readiness checks

Many organizations also use architecture review boards or structured architecture evaluation methods. However, AI introduces a new dimension. These older review mechanisms assume that humans fully understand the system they built.

With AI, that assumption no longer always holds: large parts of a system may be generated automatically.

In some cases it may even be difficult for a human to read and fully understand everything that was generated.

This leads to a fundamental question:

How do we review systems generated with AI assistance?

4. Using AI to Review AI

One obvious idea is to use AI systems to review AI-generated artifacts.

This introduces a recursive situation: AI generates artifacts that AI must then evaluate.

Recent research and engineering practices already explore concepts such as:

- Model-as-a-Judge

- automated evaluation frameworks

- AI-assisted architecture validation

- prompt-based system reviews

These approaches suggest that AI could help analyze:

- generated code

- architectural structures

- documentation

- configuration files
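As a minimal sketch of the Model-as-a-Judge idea, the following Python example treats the judge as a pluggable function. Everything in it is an assumption made for illustration: the `Verdict` type, the `review_artifacts` helper, the confidence threshold, and the stand-in `toy_judge`, which uses simple string rules instead of calling a real model. The point is only the shape of the workflow: an automated judge scores each artifact, and anything it is unsure about is routed to a human.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Verdict:
    passed: bool        # judge's opinion: is the artifact acceptable?
    confidence: float   # judge's self-reported confidence, 0.0 to 1.0
    reason: str


# A judge is any callable that maps (artifact kind, content) to a Verdict.
# In practice this would wrap a model call; here it is left pluggable.
Judge = Callable[[str, str], Verdict]


def toy_judge(kind: str, content: str) -> Verdict:
    """Hypothetical stand-in for a model-based judge (simple string rules)."""
    if "TODO" in content:
        return Verdict(False, 0.9, "unfinished section found")
    if len(content) > 40:
        return Verdict(True, 0.5, "too long to assess with confidence")
    return Verdict(True, 0.95, "no issues found")


def review_artifacts(
    artifacts: Dict[str, Tuple[str, str]],
    judge: Judge,
    min_confidence: float = 0.8,  # hypothetical threshold
) -> Dict[str, List[str]]:
    """Sort artifacts into accepted, rejected, and needs-human-review."""
    result: Dict[str, List[str]] = {"accepted": [], "rejected": [], "human_review": []}
    for name, (kind, content) in artifacts.items():
        verdict = judge(kind, content)
        if verdict.confidence < min_confidence:
            result["human_review"].append(name)  # judge is unsure: escalate
        elif verdict.passed:
            result["accepted"].append(name)
        else:
            result["rejected"].append(name)
    return result


# Made-up artifacts, one per kind of input the judge might see.
artifacts = {
    "app.py": ("generated code", "def add(a, b): return a + b"),
    "README.md": ("documentation", "TODO: describe setup"),
    "deploy.yaml": ("configuration file", "replicas: 3\n" + "env: prod\n" * 5),
}
outcome = review_artifacts(artifacts, toy_judge)
print(outcome)
```

With the toy rules, `app.py` is accepted, `README.md` is rejected, and `deploy.yaml` lands in the human review queue because the judge reports low confidence. A real judge would replace `toy_judge`, but the escalation path stays the same.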

However, this approach raises another important question.

If AI reviews AI-generated systems:

How much can we trust the results?

5. Trust, Risk and Responsibility

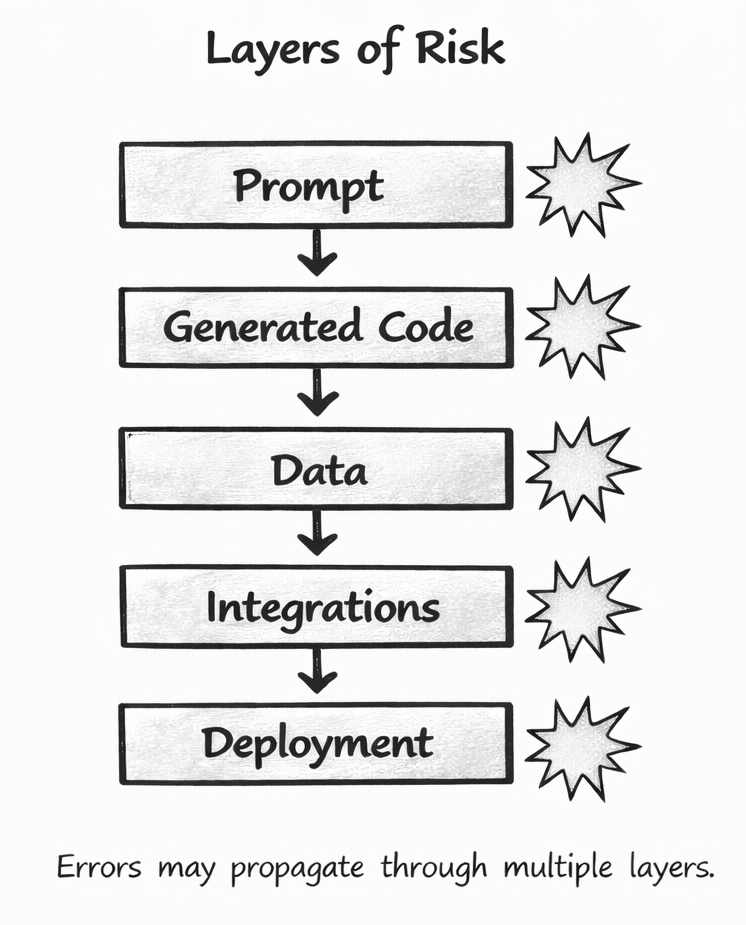

Even highly capable models are not perfect.

Many systems deliver results that appear correct in 90–95% of cases, but the remaining cases can hide problems that are hard to detect in complex systems.

Errors may propagate across multiple layers:

- prompts

- generated code

- data pipelines

- integrations between services
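A short calculation illustrates why per-layer accuracy can be misleading. The sketch below assumes, purely for illustration, that each of the four layers is correct in 95% of cases and that failures are independent. Neither assumption comes from measured data.

```python
from math import prod

# Illustrative assumption: each layer is correct in 95% of cases,
# and failures in different layers are independent of each other.
layer_success = {
    "prompts": 0.95,
    "generated code": 0.95,
    "data pipelines": 0.95,
    "service integrations": 0.95,
}


def end_to_end_success(rates):
    """Probability that every layer is correct at the same time."""
    return prod(rates)


overall = end_to_end_success(layer_success.values())
print(f"end-to-end success: {overall:.1%}")  # about 81.5%
```

Under these assumptions, four layers that each look reliable on their own produce a correct end-to-end result in only about four out of five cases.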

Eventually, someone must decide whether a system is ready for production.

AI systems cannot carry responsibility.

The responsibility always remains with the humans and organizations operating the system.

In earlier work I explored this challenge from a slightly different perspective.

Instead of expecting AI systems to behave deterministically, it may be more realistic to evaluate them through risk-aware trust.

The key question therefore becomes:

Is the system trustworthy enough for the risk level of the use case?
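One way to make this question concrete is a release gate that compares measured quality against a threshold that depends on the risk level. The sketch below is only an illustration: the `RiskLevel` categories, the threshold values, and the `ready_for_production` function are hypothetical and not taken from any standard.

```python
from enum import Enum


class RiskLevel(Enum):
    LOW = "low"        # e.g. internal prototype
    MEDIUM = "medium"  # e.g. internal tool with real users
    HIGH = "high"      # e.g. customer-facing or regulated use case


# Hypothetical thresholds: minimum share of evaluation cases that must pass.
# Real values would have to be set by the people accountable for the system.
REQUIRED_PASS_RATE = {
    RiskLevel.LOW: 0.80,
    RiskLevel.MEDIUM: 0.95,
    RiskLevel.HIGH: 0.99,
}


def ready_for_production(pass_rate: float, risk: RiskLevel, human_signoff: bool) -> bool:
    """Gate a release on measured quality, risk level, and a human decision."""
    if not human_signoff:
        return False  # responsibility stays with a person, not the model
    return pass_rate >= REQUIRED_PASS_RATE[risk]


# The same measured pass rate can be acceptable for one use case and not another.
print(ready_for_production(0.93, RiskLevel.LOW, human_signoff=True))     # True
print(ready_for_production(0.93, RiskLevel.HIGH, human_signoff=True))    # False
print(ready_for_production(0.999, RiskLevel.HIGH, human_signoff=False))  # False
```

The human sign-off is checked first on purpose: no measured pass rate, however high, replaces the decision of the person or organization that carries the responsibility.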

Reference

Thomas Südbröcker

It’s All About Risk-Taking: Why “Trustworthy” Beats “Deterministic” in the Era of Agentic AI

https://suedbroecker.net/2025/10/23/its-all-about-risk-taking-why-trustworthy-beats-deterministic-in-the-era-of-agentic-ai/

6. Are Traditional Review Criteria Still Valid?

Most existing review processes were designed for human-written software.

Examples include:

- architecture review boards

- security reviews

- operational readiness checks

- structured architecture evaluation methods

But when software is increasingly generated by AI, a natural question arises:

Are these criteria still sufficient?

Or do we need new review dimensions that specifically address:

- AI-generated artifacts

- prompt engineering

- model behavior

- automated code generation workflows

At the moment, the most pragmatic approach may be to start with existing engineering practices and gradually extend them.
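A minimal sketch of what "extending existing practices" could look like as a data structure follows. The `ReviewItem` type, the `build_checklist` function, and the wording of every checklist question are hypothetical and only meant to show the idea of adding AI-specific items on top of an unchanged baseline.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ReviewItem:
    question: str
    ai_specific: bool = False


# Checks taken from the existing practices named above.
EXISTING_CHECKS = [
    ReviewItem("Has the architecture been reviewed?"),
    ReviewItem("Has a security review been completed?"),
    ReviewItem("Is the system operationally ready?"),
]

# Hypothetical AI-specific extensions; the wording is illustrative only.
AI_EXTENSIONS = [
    ReviewItem("Which artifacts were generated by AI?", ai_specific=True),
    ReviewItem("Are the prompts versioned and reviewed?", ai_specific=True),
    ReviewItem("Has model behavior been evaluated?", ai_specific=True),
    ReviewItem("Is the code generation workflow documented?", ai_specific=True),
]


def build_checklist(uses_ai_generation: bool) -> List[ReviewItem]:
    """Start from the existing checks and extend them only where needed."""
    checklist = list(EXISTING_CHECKS)
    if uses_ai_generation:
        checklist.extend(AI_EXTENSIONS)
    return checklist


for item in build_checklist(uses_ai_generation=True):
    marker = "[AI]" if item.ai_specific else "[  ]"
    print(marker, item.question)
```

Keeping the existing checks untouched and adding the AI-specific ones as a separate list makes it easy to see what is new and to grow that list gradually.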

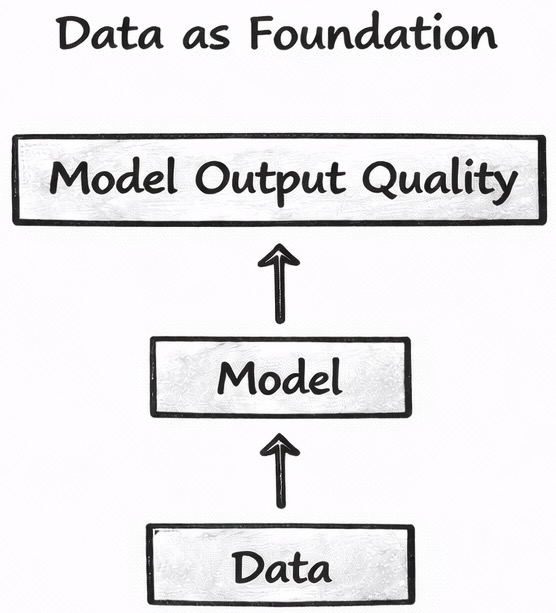

7. Data Still Defines the Outcome

Another important factor that should not be forgotten is data. Even the best review process cannot compensate for poor data.

Every software system ultimately depends on the data it processes.

For AI systems this becomes even more critical.

The quality of the results depends heavily on:

- data quality

- data completeness

- representativeness of the data

- potential bias in the data

A serious review of AI-driven systems therefore must also include a review of the data foundation.

Without suitable data, even the most advanced models cannot deliver reliable results.
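Two of the data criteria listed above, completeness and representativeness, can be checked with very little code. The following sketch uses only the Python standard library. The helper names `completeness` and `group_shares` and the sample records are made up for illustration, and real data reviews would need far more than these two numbers.

```python
from collections import Counter
from typing import Dict, List, Optional

Record = Dict[str, Optional[str]]


def completeness(records: List[Record], fields: List[str]) -> float:
    """Share of required field values that are present (not None or empty)."""
    total = len(records) * len(fields)
    if total == 0:
        return 0.0
    present = sum(1 for r in records for f in fields if r.get(f) not in (None, ""))
    return present / total


def group_shares(records: List[Record], field: str) -> Dict[str, float]:
    """Share of records per value of `field`, to spot under-represented groups."""
    values = [r[field] for r in records if r.get(field)]
    counts = Counter(values)
    return {value: count / len(values) for value, count in counts.items()}


# Made-up sample records, for illustration only.
records = [
    {"region": "EU", "label": "ok"},
    {"region": "EU", "label": "ok"},
    {"region": "EU", "label": None},
    {"region": "US", "label": "defect"},
]

print(completeness(records, ["region", "label"]))  # 0.875
print(group_shares(records, "region"))             # {'EU': 0.75, 'US': 0.25}
```

In this sample, one of eight required values is missing, and three quarters of the records come from a single region. Whether that is acceptable depends on the use case, which is exactly what a data review has to decide.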

8. Architecture and Regulations Are Moving Targets

Architecture quality is not static.

What was considered a good architecture in the monolith era may not be acceptable for modern distributed or cloud-native systems.

Architectural standards evolve as technologies and infrastructures change.

In addition, legal and regulatory frameworks around AI are evolving rapidly.

Questions related to:

- intellectual property

- liability

- ownership of generated artifacts

- responsibility for AI-generated software

are still actively being discussed.

In earlier work I explored some of these questions in the context of AI-generated software and global intellectual property law.

These legal developments introduce another dimension that must be considered when reviewing AI-driven systems.

Reference

Thomas Südbröcker

AI-Generated Software, Patents and Global IP Law: A First Deep Dive Using AI

https://suedbroecker.net/2025/11/19/ai-generated-software-patents-and-global-ip-law-a-first-deep-dive-using-ai/

9. Where We Are Today

The honest answer is:

We do not yet have a complete solution.

Many engineers coming from classical software development are still adapting to the experimental nature of AI systems.

AI development often involves:

- experimentation

- iteration

- trying different approaches

This is different from traditional engineering practices, which often emphasize strict processes and predictability.

At the moment, the most practical approach may be:

- start with existing review methods

- extend them step by step

- experiment with AI-assisted evaluation techniques

This transition phase requires both experimentation and responsible engineering practices.

10. Summary

AI tools are making software generation easier than ever.

But building trustworthy systems remains difficult.

- Complexity still exists.

- Data still matters.

- Architecture still evolves.

- Regulations are still emerging.

What is changing is the way software is created — and therefore also the way it must be reviewed.

AI may help with these reviews.

But responsibility cannot disappear.

Engineers and organizations still decide whether a system is safe enough to run in the real world.

And one principle should remain clear:

If AI systems review AI-generated systems, we may implicitly approve software that no human fully understands.

Approving the unknown has never been a safe strategy for engineering systems. And that is exactly the challenge engineering teams must solve in the age of AI-generated systems.

11. References

Operational Complexity Cube — Thomas Südbröcker

https://suedbroecker.net

Thomas Südbröcker

It’s All About Risk-Taking: Why “Trustworthy” Beats “Deterministic” in the Era of Agentic AI

https://suedbroecker.net/2025/10/23/its-all-about-risk-taking-why-trustworthy-beats-deterministic-in-the-era-of-agentic-ai/

Thomas Südbröcker

AI-Generated Software, Patents and Global IP Law: A First Deep Dive Using AI

https://suedbroecker.net/2025/11/19/ai-generated-software-patents-and-global-ip-law-a-first-deep-dive-using-ai/

Karpathy, A.

Software 2.0

https://karpathy.medium.com/software-2-0-a64152b37c35

NIST

AI Risk Management Framework

https://www.nist.gov/itl/ai-risk-management-framework

Zheng et al.

Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena

https://arxiv.org/abs/2306.05685

CMU SEI

Architecture Tradeoff Analysis Method (ATAM)

https://resources.sei.cmu.edu/library/asset-view.cfm?assetid=513841

Google

Machine Learning Crash Course — Data Bias

https://developers.google.com/machine-learning/crash-course

Note: This post reflects my own ideas and experience; AI was used only as a writing and thinking aid to help structure and clarify the arguments, not to define them.

#ai, #artificialintelligence, #softwareengineering, #sdlc, #aiagents, #agenticai, #generativeai, #aidevelopment, #aigeneratedcode, #codereview, #softwarereview, #softwarearchitecture, #trustworthyai, #aiethics, #airesponsibility, #riskmanagement, #datagovernance, #aigovernance, #aiquality, #engineering, #devops, #mlops
