This blog post is about deploying the IBM Watson Natural Language Processing Library for Embed to an IBM Cloud Kubernetes cluster in a Virtual Private Cloud (VPC) environment. It is related to my blog post Run Watson NLP for Embed on IBM Cloud Code Engine. An IBM Cloud Kubernetes cluster is a “certified, managed Kubernetes solution, built for creating a cluster of compute hosts to deploy and manage containerized apps on IBM Cloud”.

The IBM Watson Libraries for Embed are made for IBM Business Partners. Partners can get additional details about embeddable AI on the IBM Partner World page. If you are an IBM Business Partner, you can get free access to the IBM Watson Natural Language Processing Library for Embed.

To get started with the libraries, you can use the Watson Natural Language Processing Library for Embed documentation. It is awesome documentation, and it is publicly available.

I used parts of the great content of the IBM Watson documentation for my Kubernetes example. I created a GitHub project with some additional bash scripting. The project is called Run Watson NLP for Embed on an IBM Cloud Kubernetes cluster.

This project has two objectives.

- Create an IBM Cloud Kubernetes cluster in a Virtual Private Cloud (VPC) environment with Terraform
- Deploy Watson NLP for Embed to the created cluster with Helm

The blog post is structured as follows:

- Some simplified technical basics about IBM Watson Libraries for Embed
- Simplified overview of the dependencies for the running Watson NLP for Embed container on an IBM Cloud Kubernetes cluster in a VPC environment
- Some screenshots from a running example using an IBM Cloud Kubernetes cluster in a VPC environment
- Setup of the example
- Summary

1. Some simplified technical basics about IBM Watson Libraries for Embed

The IBM Watson Libraries for Embed provide a lot of pre-trained models, which you can find in the related model catalog for the Watson Libraries. Here is a link to the model catalog for Watson NLP; the catalog is publicly available.

There are runtime containers for AI functionality such as NLP, Speech to Text, and so on. You can integrate these containers into your application and customize them, for example with existing pre-trained models, but you can also use models you created yourself. Because the container runtime is separated from the models that are loaded into it, we are free to include exactly the models our application needs to fulfil the requirements of our business use case.

The resources are available in an IBM Cloud Container Registry. The image below shows a simplified overview.

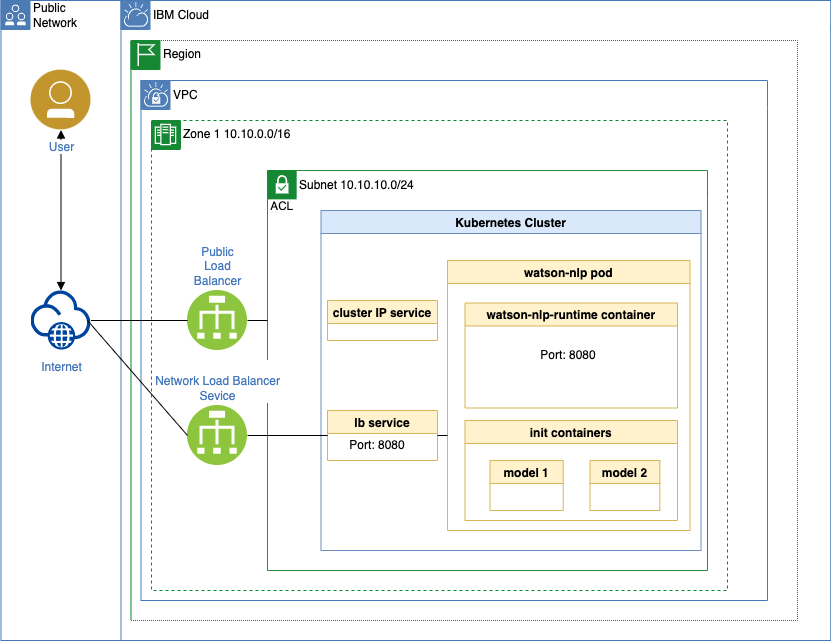

2. Simplified overview of the dependencies for the running Watson NLP for Embed container on an IBM Cloud Kubernetes cluster in a VPC environment

The next image shows a simplified overview of the dependencies of the running Watson NLP pod on the Kubernetes cluster. This setup is a bit different from the detailed instructions in the official IBM documentation Run with Kubernetes: it contains an additional Kubernetes load balancer service configuration for an IBM Cloud VPC.

The deployment shown above is managed with a Helm chart, and its objectives are:

1. Provide a load balancer service for direct access from the internet to Watson NLP for Embed. The ClusterIP service only makes the pod reachable from inside the cluster, so it doesn't provide this access in the VPC environment.

2. Create the pull secret from a specification in the Helm chart instead of with a kubectl command.
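To illustrate the first objective, here is a plain Kubernetes Service of type LoadBalancer. This is a minimal sketch, not the manifest from the project's Helm chart: the helper name render_lb_service, the service name suffix -lb, and the selector label app=<name> are assumptions, and the selector must match the labels of the real Deployment. IBM Cloud VPC also supports provider-specific annotations on such a service (for example to choose the load balancer type); none are shown here.

```shell
#!/bin/sh
# Hypothetical helper: prints a Service of type LoadBalancer that forwards
# external traffic to the Watson NLP pod. Name, label and port are assumptions.
render_lb_service() {
  _name="$1"   # e.g. watson-nlp-container (assumed)
  _port="$2"   # container port used in this setup: 8080
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${_name}-lb
spec:
  # On IBM Cloud VPC the cloud provider creates a VPC load balancer for this type.
  type: LoadBalancer
  selector:
    app: ${_name}
  ports:
    - protocol: TCP
      port: ${_port}
      targetPort: ${_port}
EOF
}

# Usage, assuming kubectl is connected to the cluster:
# render_lb_service watson-nlp-container 8080 | kubectl apply -f -
```

Rendering the manifest from a function keeps it reviewable before it is applied; in the project itself, the equivalent specification lives in the Helm chart.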

The Helm chart contains the following specifications (the links point to the source code). Again, this configuration is a bit different from the IBM documentation Run with Kubernetes.

- Watson NLP for Embed – Deployment: This deployment uses the container port 8080 and not 8085.
- Pull secret: This pull secret specification is created automatically by the bash automation and doesn't exist in the Run with Kubernetes documentation.
- Cluster IP service: This service uses the port 8080 and not 8085.
- Load balancer service: This service specification doesn't exist in the “Run with Kubernetes” IBM documentation.
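The pull secret holds a Docker config JSON for IBM's entitled registry cp.icr.io, where the user name is cp and the password is the IBM entitlement key. The following is a minimal sketch of how such a value could be built in the shell. It is not the project's createDockerCustomConfigFile function, the helper names are hypothetical, and it assumes the key and email contain no characters that need JSON escaping. For comparison, the kubectl approach that the Helm chart replaces is kubectl create secret docker-registry with --docker-server=cp.icr.io, --docker-username=cp and --docker-password set to the entitlement key.

```shell
#!/bin/sh
# Base64-encode stdin on a single line (GNU base64 wraps long output).
b64() { base64 | tr -d '\n'; }

# Hypothetical helper: prints a Docker config JSON for cp.icr.io.
# "auth" is base64("cp:<entitlement key>"), the format Docker expects.
create_docker_config_json() {
  _key="$1"
  _email="$2"
  _auth="$(printf '%s' "cp:${_key}" | b64)"
  printf '{"auths":{"cp.icr.io":{"username":"cp","password":"%s","email":"%s","auth":"%s"}}}' \
    "$_key" "$_email" "$_auth"
}

# A secret of type kubernetes.io/dockerconfigjson stores this JSON,
# base64-encoded, under the data key ".dockerconfigjson":
# create_docker_config_json "$IBM_ENTITLEMENT_KEY" "$IBM_ENTITLEMENT_EMAIL" | b64
```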

3. Some screenshots from a running example using an IBM Cloud Kubernetes cluster in a VPC environment

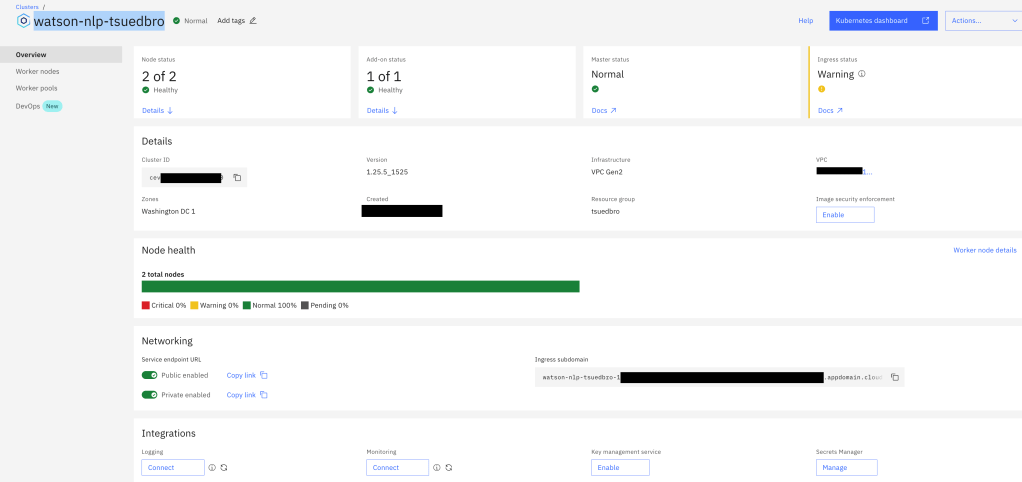

- Kubernetes cluster

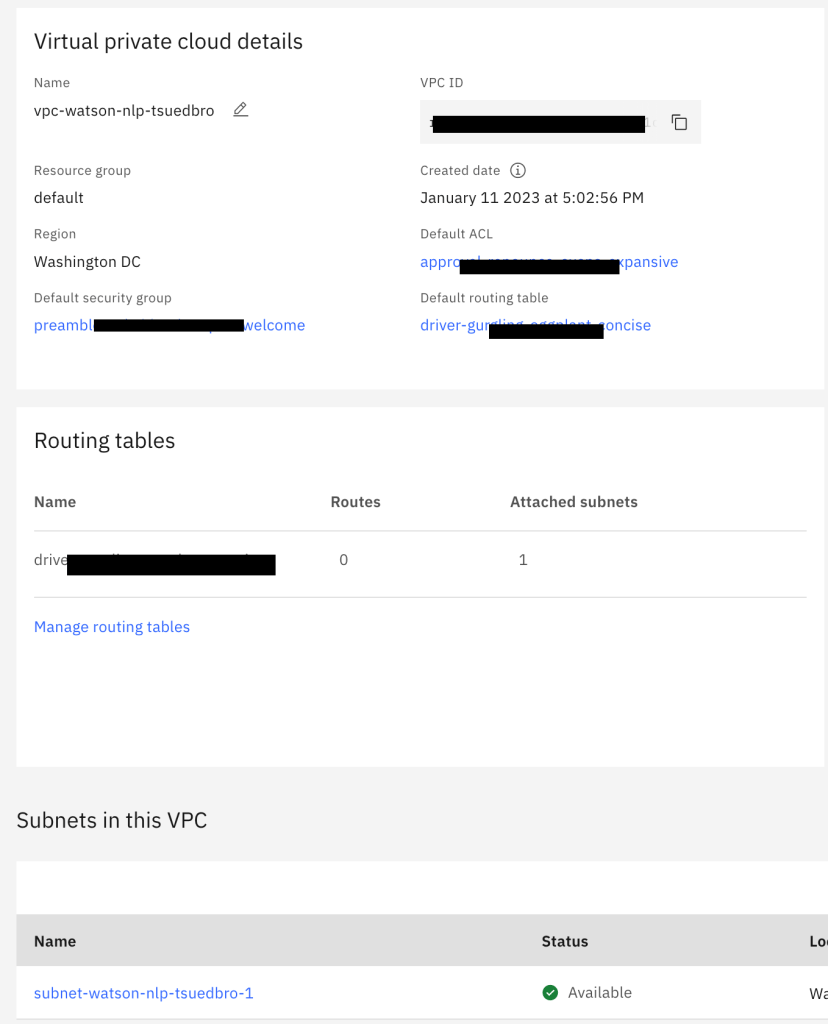

- Virtual Private Cloud

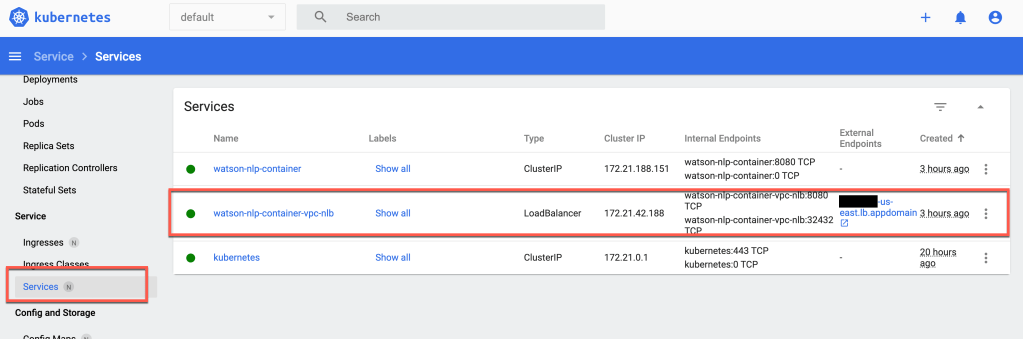

- The

load balancer servicein Kubernetes

- The

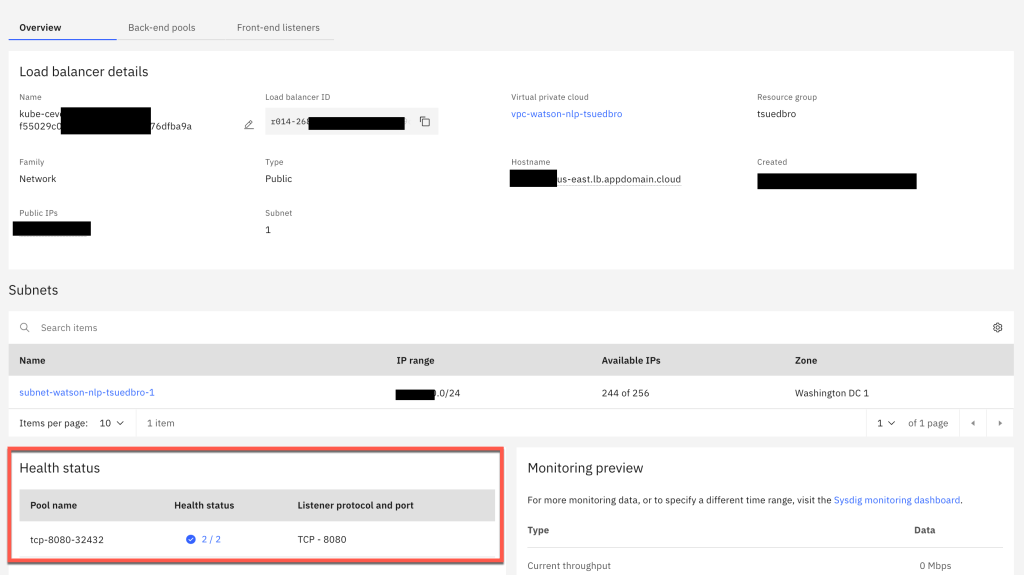

load balancer servicein Kubernetes creates anetwork load balancerin the IBM Cloud VPC

- Running pod on Kubernetes

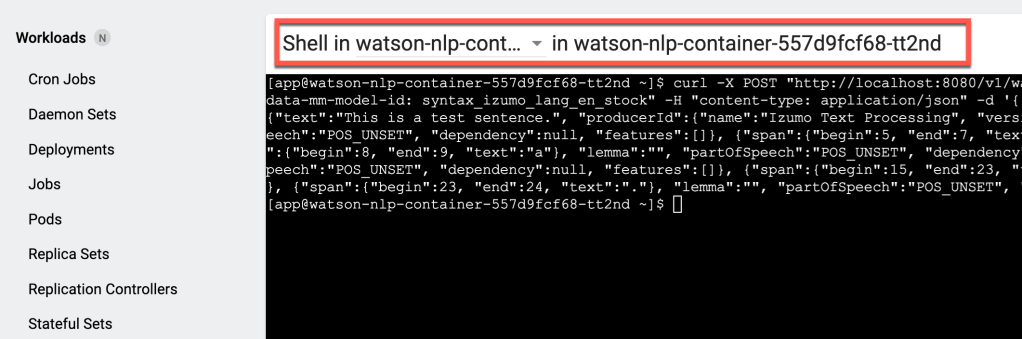

- Access the container in the pod on Kubernetes

4. Setup of the example

The example setup in the GitHub project Run Watson NLP for Embed on an IBM Cloud Kubernetes cluster contains two bash automations:

Step 4.1: Clone the repository

```shell
git clone https://github.com/thomassuedbroecker/terraform-vpc-kubernetes-watson-nlp.git
cd terraform-vpc-kubernetes-watson-nlp
```

4.1 Terraform: Automation to create the Kubernetes cluster and VPC on IBM Cloud

Step 4.1.1: Navigate to the terraform_setup folder

```shell
cd code/terraform_setup
```

Step 4.1.2: Create a .env file

```shell
cat .env_template > .env
```

Step 4.1.3: Add an IBM Cloud access key to your local .env file

```shell
nano .env
```

The content of the file:

```shell
export IC_API_KEY=YOUR_IBM_CLOUD_ACCESS_KEY
export REGION="us-east"
export GROUP="tsuedbro"
```
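A missing value in the .env file usually only shows up later as a failed Terraform or Helm run, so it can help to check the variables right after loading the file. This is a sketch with a hypothetical helper require_env; the project's scripts may not contain such a check.

```shell
#!/bin/sh
# Hypothetical helper: reports every named variable that is unset or empty
# and returns 1 if at least one is missing.
require_env() {
  _missing=0
  for _var in "$@"; do
    # Read the value of the variable whose name is stored in _var.
    eval "_value=\${${_var}:-}"
    if [ -z "$_value" ]; then
      echo "Missing variable: ${_var}" >&2
      _missing=1
    fi
  done
  return "$_missing"
}

# Usage:
# . ./.env
# require_env IC_API_KEY REGION GROUP || exit 1
```

The helper uses eval instead of bash-only indirect expansion so it also works when the scripts are started with sh, as in this post.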

Step 4.1.4: Verify the global variables in the bash script automation

Inspect the bash automation create_vpc_kubernetes_cluster_with_terraform.sh and adjust the values to your needs.

```shell
nano create_vpc_kubernetes_cluster_with_terraform.sh
```

The global variables in the script:

```shell
#export TF_LOG=debug
export TF_VAR_flavor="bx2.4x16"
export TF_VAR_worker_count="2"
export TF_VAR_kubernetes_pricing="tiered-pricing"
export TF_VAR_resource_group=$GROUP
export TF_VAR_vpc_name="watson-nlp-tsuedbro"
export TF_VAR_region=$REGION
export TF_VAR_kube_version="1.25.5"
export TF_VAR_cluster_name="watson-nlp-tsuedbro"
```
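The exported TF_VAR_ variables are how the script hands values to Terraform: Terraform reads an environment variable named TF_VAR_<name> as the value of the input variable <name>, so TF_VAR_worker_count sets worker_count, assuming the project's Terraform files declare a variable with that name. The small sketch below (the helper name list_tf_vars is hypothetical) prints which input variables the current shell would pass. It prints only the names, because a value could be a secret.

```shell
#!/bin/sh
# Hypothetical helper: prints the names of all Terraform input variables
# set through TF_VAR_<name> environment variables, sorted.
list_tf_vars() {
  env | sed -n 's/^TF_VAR_\([^=]*\)=.*/\1/p' | sort
}

# Usage: after the exports above, compare the output with the
# variable blocks in the project's .tf files.
# list_tf_vars
```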

Step 4.1.5: Execute the bash automation

The creation can take up to 1 hour, depending on the region you use.

```shell
sh create_vpc_kubernetes_cluster_with_terraform.sh
```

- Example output:

```
...
Apply complete! Resources: 5 added, 0 changed, 0 destroyed.
*********************************
```

4.2 Helm: Automation to deploy Watson NLP for Embed

Step 4.2.1: Navigate to the helm_setup folder (starting from the root folder of the cloned repository)

```shell
cd code/helm_setup
```

Step 4.2.2: Create a .env file

```shell
cat .env_template > .env
```

Step 4.2.3: Add an IBM Cloud access key, your IBM entitlement key and email, and your cluster ID to your local .env file

```shell
export IC_API_KEY=YOUR_IBM_CLOUD_ACCESS_KEY
export IBM_ENTITLEMENT_KEY="YOUR_KEY"
export IBM_ENTITLEMENT_EMAIL="YOUR_EMAIL"
export CLUSTER_ID="YOUR_CLUSTER"
export REGION="us-east"
export GROUP="tsuedbro"
```
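These variables feed the first two steps of the automation: logging on to IBM Cloud and connecting to the cluster. Here is a rough sketch of those steps with the IBM Cloud CLI, assuming the Kubernetes Service plugin (ibmcloud ks) is installed. The function name is hypothetical, and the project's loginIBMCloud and connectToCluster functions may differ in detail.

```shell
#!/bin/sh
# Hypothetical sketch of the log-on and connect steps, using the variables
# from the .env file above.
login_and_connect() {
  # Log on non-interactively with the API key, target region and resource group.
  ibmcloud login --apikey "$IC_API_KEY" -r "$REGION" -g "$GROUP" || return 1
  # Download the cluster's kubeconfig so that kubectl talks to this cluster.
  ibmcloud ks cluster config --cluster "$CLUSTER_ID" || return 1
  # Simple connectivity check.
  kubectl get nodes
}

# Usage:
# . ./.env
# login_and_connect
```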

Step 4.2.4: Execute the bash automation

```shell
sh deploy-watson-nlp-to-kubernetes.sh
```

The bash script executes the following steps (the links point to the relevant functions in the bash automation):

- It logs on to IBM Cloud with an IBM Cloud API key.
- It ensures that it is connected to the cluster.
- It creates a Docker config file, which is used to create a container pull secret.
- It installs the Helm chart for Watson NLP for Embed, configured for REST API usage.
- It verifies that the container is running and invokes a REST API call inside the runtime container of Watson NLP for Embed.
- It verifies that the Kubernetes URL exposed by the load balancer service is working and invokes the same REST API call as before from the local machine.
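The waiting in the verifyPod step (the Status: 0/1 and 1/1 lines in the example output) can be sketched as a loop over the READY column of kubectl get pods. Both function names and the label app=watson-nlp-container are assumptions, not taken from the project. A simpler alternative is kubectl wait --for=condition=Ready pod -l app=watson-nlp-container --timeout=10m.

```shell
#!/bin/sh
# Returns success when a READY value such as "1/1" (as printed by
# "kubectl get pods") shows that all containers of the pod are ready.
is_ready() {
  case "$1" in
    */*) ;;           # expected form: <ready>/<total>
    *) return 1 ;;
  esac
  _ready="${1%%/*}"   # part before the slash
  _total="${1##*/}"   # part after the slash
  [ -n "$_ready" ] && [ "$_ready" = "$_total" ] && [ "$_total" != "0" ]
}

# Hypothetical polling loop: checks the pod with the assumed label
# app=watson-nlp-container once per minute, for up to 10 minutes.
wait_for_pod() {
  _tries=0
  while [ "$_tries" -lt 10 ]; do
    _status="$(kubectl get pods -l app=watson-nlp-container --no-headers | awk '{print $2}' | head -n 1)"
    echo "$(date '+%Y-%m-%d %H:%M:%S') Status: ${_status}"
    if is_ready "$_status"; then
      return 0
    fi
    _tries=$((_tries + 1))
    sleep 60
  done
  return 1
}
```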

Example output:

```
*********************
loginIBMCloud
*********************
...
*********************
connectToCluster
*********************
OK
...
*********************
createDockerCustomConfigFile
*********************
IBM_ENTITLEMENT_SECRET:
...
*********************
installHelmChart
*********************
...
*********************
verifyDeploment
*********************
------------------------------------------------------------------------
Check watson-nlp-container
Status: watson-nlp-container
2023-01-12 09:43:32 Status: watson-nlp-container is created
------------------------------------------------------------------------
*********************
verifyPod could up to take 10 min
*********************
------------------------------------------------------------------------
Check watson-nlp-container
Status: 0/1
2023-01-12 09:43:32 Status: watson-nlp-container(0/1)
------------------------------------------------------------------------
Status: 0/1
2023-01-12 09:44:33 Status: watson-nlp-container(0/1)
------------------------------------------------------------------------
Status: 1/1
2023-01-12 09:45:34 Status: watson-nlp-container is created
------------------------------------------------------------------------
*********************
verifyWatsonNLPContainer
*********************
Pod: watson-nlp-container-557d9fcf68-wm4vp
Result of the Watson NLP API request:
http://localhost:8080/v1/watson.runtime.nlp.v1/NlpService/SyntaxPredict
{"text":"This is a test sentence.", "producerId":{"name":"Izumo Text Processing", "version":"0.0.1"}, "tokens":[{"span":{"begin":0, "end":4, "text":"This"}, "lemma":"", "partOfSpeech":"POS_UNSET", "dependency":null, "features":[]}, {"span":{"begin":5, "end":7, "text":"is"}, "lemma":"", "partOfSpeech":"POS_UNSET", "dependency":null, "features":[]}, {"span":{"begin":8, "end":9,
...
EXTERNAL_IP: XXX
Verify invocation of Watson NLP API from the local machine:
{"text":"This is a test sentence.", "producerId":{"name":"Izumo Text Processing", "version":"0.0.1"}, "tokens":[{"span":{"begin":0, "end":4, "text":"This"}, "lemma":"", "partOfSpeech":"POS_UNSET", "dependency":null, "features":[]}, {"span":{"begin":5, "end":7, "text":"is"}, "lemma":"", "partOfSpeech":"POS_UNSET", "dependency":null, "features":[]}, {"span":
....
```
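The last verification, calling the API from the local machine through the load balancer, can also be done by hand. This is a sketch under several assumptions. The helper names and the service name are hypothetical, and the load balancer is assumed to listen on port 8080. The request body and the model ID header follow the curl examples in IBM's Watson NLP documentation for the syntax_izumo_lang_en_stock model (the "Izumo Text Processing" producer in the output above). On IBM Cloud VPC, the load balancer service usually reports a hostname rather than an IP address, so both fields are read.

```shell
#!/bin/sh
# Hypothetical helper: builds the SyntaxPredict URL for a host and port
# (port defaults to 8080, the port assumed for the load balancer).
syntax_predict_url() {
  echo "http://${1}:${2:-8080}/v1/watson.runtime.nlp.v1/NlpService/SyntaxPredict"
}

# Reads the external address of a load balancer service; tries the
# hostname first (typical on IBM Cloud VPC), then the IP address.
external_address() {
  _addr="$(kubectl get svc "$1" -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')"
  if [ -z "$_addr" ]; then
    _addr="$(kubectl get svc "$1" -o jsonpath='{.status.loadBalancer.ingress[0].ip}')"
  fi
  echo "$_addr"
}

# Sends the same test sentence as the automation; the model ID is an
# assumption based on IBM's examples.
syntax_predict() {
  curl -s -X POST "$(syntax_predict_url "$1" "$2")" \
    -H "accept: application/json" \
    -H "content-type: application/json" \
    -H "grpc-metadata-mm-model-id: syntax_izumo_lang_en_stock" \
    -d '{"raw_document":{"text":"This is a test sentence."},"parsers":["token"]}'
}

# Usage (the service name is hypothetical):
# syntax_predict "$(external_address watson-nlp-container-lb)"
```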

5. Summary

Compared to the blog post “Run Watson NLP for Embed on IBM Cloud Code Engine”, this setup requires you to understand more about containers, Kubernetes, Virtual Private Cloud, and the cloud provider. Overall, it is awesome that the Watson Natural Language Processing Library for Embed is a containerized implementation that you can run anywhere.

I hope this was useful to you, and let’s see what’s next!

Greetings,

Thomas

#ibmcloud, #watsonnlp, #ai, #bashscripting, #kubernetes, #container, #vpc